The costs incurred from fire accidents keep rising due to global warming. In the oil, gas, and petroleum industries, such accidents often lead to product loss, equipment damage, or worker injuries. If detected early, fires can be prevented from spreading or contained effectively. Outdoors, however, smoke and flame detectors are of little use, because they must be close to the fire source and need a large amount of smoke to trigger. The traditional alternative of having human operators watch camera feeds is also ineffective, since operators cannot efficiently monitor the large number of cameras installed at a working site. For these reasons, engineers and industry are turning to intelligent machines to detect fires.

Dr. Jinsong Zhao et al. from the Department of Chemical Engineering at Tsinghua University in China proposed a novel, automatic, and intelligent fire detection approach based on computer vision for factories with a high risk of fire incidents. Applying computer vision in such industries can considerably improve both safety and profit margins by detecting fire at a very early stage, speeding up the response, and preventing heavy losses of human life, property, and products.

Historically, fire detection via computer vision relied on two kinds of techniques, color models and hand-designed features, with which machines recognized the color and shape of flames. However, these models were not reliable. In 2012, AlexNet, a deep convolutional neural network (CNN), won the ImageNet Large Scale Visual Recognition Challenge (ILSVRC). CNNs had first been introduced in the late 1980s, but it was after this result that they began to replace traditional hand-designed feature descriptors such as scale-invariant feature transform (SIFT), histogram of oriented gradients (HOG), and local binary patterns (LBP), and went on to revolutionize computer vision and pattern recognition. Computer vision has three core tasks: image classification, object detection, and instance segmentation. Among them, image classification has been thoroughly investigated using the ImageNet dataset.

In 2016, a CNN model was applied to fire and smoke detection and outperformed comparable methods. The following year, an approach combining a CNN with a support vector machine (SVM) outperformed the pure CNN model. In the same year, another approach based on AdaBoost, LBP, and a CNN was introduced to detect fires. Although this method performed well, it could not locate the fire, and it could miss a small fire in a large image because of the background pixels. Moreover, none of the above models can reliably distinguish fire from the many fire-like objects found in real applications, which leads to a considerable number of false alarms.

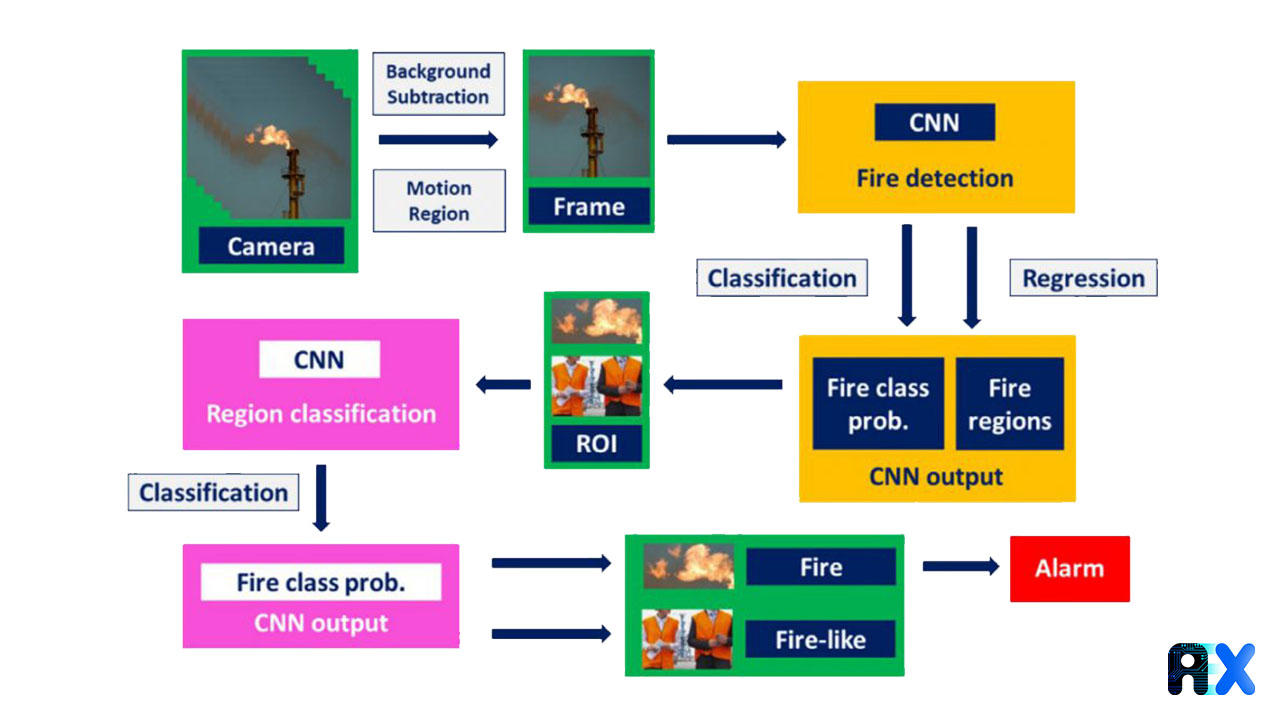

The method devised by Zhao et al. aims to detect fire quickly, with high accuracy and a low false alarm rate. To this end, they combined image classification and object detection. The overall procedure consists of three major steps: motion detection, fire detection, and region classification (Figure 1).

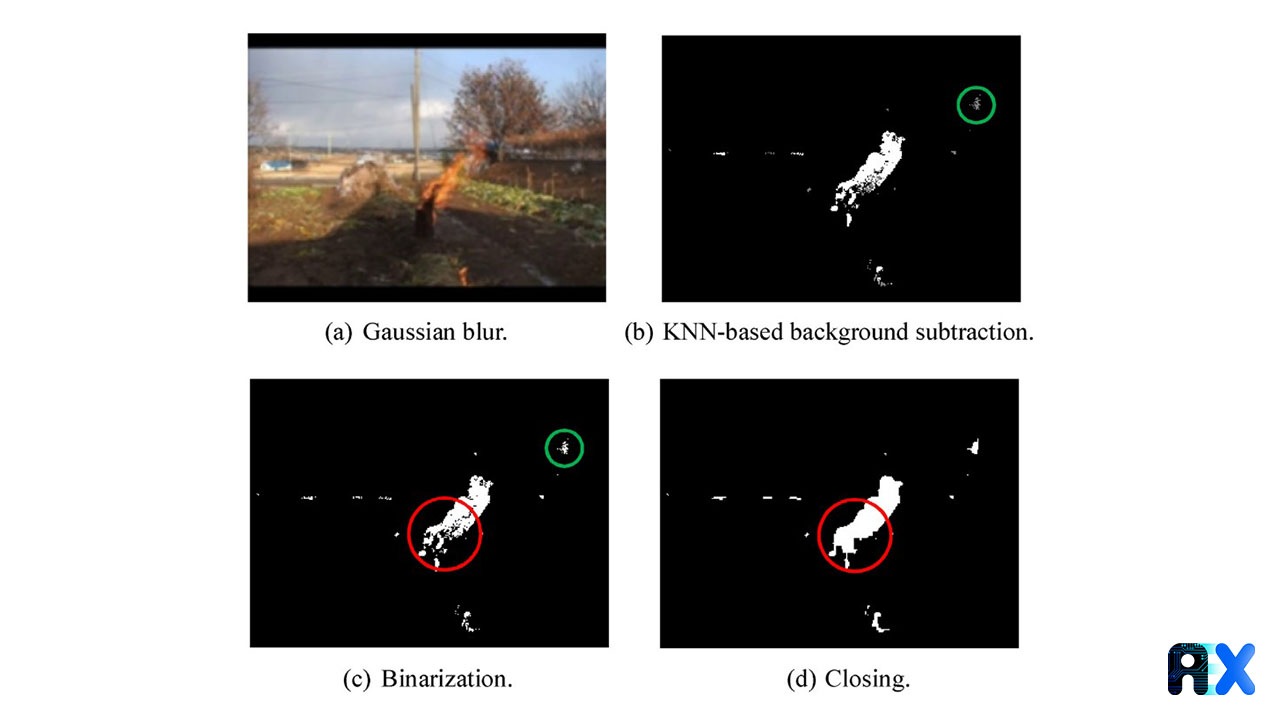

In the first step, footage from surveillance cameras is processed with OpenCV, an open-source computer vision library, to subtract the background and detect moving objects. This is far cheaper than running the CNN, which is quite resource hungry, on every frame. The next steps are executed only if moving objects are detected (Figure 2). Background subtraction consists of four steps: first, remove image noise with a Gaussian blur (Figure 2a); second, capture the moving object (e.g., fire or humans) with a KNN-based model (Figure 2b); third, convert gray regions to white with a binarization method (Figure 2c); and finally, merge small discrete regions into a uniform region with the closing operation (Figure 2d). Throughout the process, the size of the white region is calculated, and if it exceeds a predetermined threshold, the subsequent steps are executed.

In the next step, the fire region is recognized with a CNN-based fire detection model rather than hand-designed feature extractors or sliding-window methods for region of interest (ROI) generation. The model takes images as input and outputs predicted labels. Object detection methods fall into two categories: one-stage and two-stage. One-stage methods, such as YOLO (You Only Look Once) and SSD, treat the task as a regression problem. Two-stage methods, such as R-CNN, Fast R-CNN, and Faster R-CNN, are based on region proposals. The main differences between the two categories are the time and computing resources they require, both of which are significantly higher for two-stage methods. One-stage methods are therefore much faster, at the cost of slightly lower accuracy. Zhao et al. employed a YOLO method for object detection (Figure 1).

However, some objects that resemble fire may be incorrectly labeled as fire regions. To prevent false alarms, the authors added a region classification model that checks in real time whether the recognized region is actually on fire. As soon as a fire is confirmed, an alarm is sent to safety technicians with important details such as the location and size of the fire, helping them extinguish it faster (Figure 1).
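The three steps form a cascade in which each stage runs only if the previous one fires. The sketch below illustrates that control flow only. The three callables (`has_motion`, `detect_fire`, `is_fire`) and the `FireAlarm` record are hypothetical placeholders standing in for the motion detector, the YOLO detector, and the region classifier; they are not the authors' code.

```python
from dataclasses import dataclass
from typing import Any, Callable, Iterable, List, Tuple

Box = Tuple[int, int, int, int]  # (x, y, width, height) in pixels


@dataclass
class FireAlarm:
    """Details sent to safety technicians: where the fire is and how large."""
    box: Box
    area: int


def process_frame(
    frame: Any,
    has_motion: Callable[[Any], bool],
    detect_fire: Callable[[Any], Iterable[Box]],
    is_fire: Callable[[Any, Box], bool],
) -> List[FireAlarm]:
    # Step 1: cheap motion gate; skip the expensive models on static frames.
    if not has_motion(frame):
        return []
    alarms = []
    # Step 2: the detector proposes candidate fire regions.
    for box in detect_fire(frame):
        # Step 3: the classifier confirms or rejects each candidate,
        # filtering out fire-like objects that would cause false alarms.
        if is_fire(frame, box):
            _, _, width, height = box
            alarms.append(FireAlarm(box=box, area=width * height))
    return alarms
```

Passing the stages in as callables keeps the gating logic independent of any particular model, so a detector or classifier can be swapped without touching the cascade.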

The entire fire image dataset contains 5075 images of various widths and heights. Of these, 80% (4060 images) form the training set and the remaining 1015 images form the testing set. Each image may include one or several fire regions, each annotated with a bounding box (Figure 3).
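As a quick check of the arithmetic, an 80/20 split of 5075 items yields 4060 and 1015. The sketch below assumes a random shuffle with a fixed seed; the article does not say how the authors actually assigned images to the two sets, and `split_dataset` is a hypothetical helper.

```python
import random


def split_dataset(items, train_fraction=0.8, seed=0):
    """Shuffle items reproducibly and split them into (train, test) lists."""
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)
    n_train = round(len(shuffled) * train_fraction)
    return shuffled[:n_train], shuffled[n_train:]


# Image indices stand in for the 5075 annotated images.
train_set, test_set = split_dataset(range(5075))
print(len(train_set), len(test_set))
```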

To evaluate a detection model, accuracy and speed should be considered together. Average Precision (AP) is the usual measure of detection accuracy and Frames Per Second (FPS) the usual measure of speed. The results indicate an AP of 98.7% on a large dataset for fire detection in real applications.
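For readers unfamiliar with these metrics, the following sketch shows one common way to compute them. It assumes each detection has already been marked as a true or false positive, typically by comparing its intersection over union (IoU) with a ground-truth box against a threshold, and it uses all-point interpolation of the precision-recall curve. The article does not state which AP variant or IoU threshold the authors used, so treat these choices and all function names as assumptions.

```python
from typing import Sequence, Tuple

Box = Tuple[float, float, float, float]  # (x_min, y_min, x_max, y_max)


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def average_precision(
    detections: Sequence[Tuple[float, bool]], num_ground_truth: int
) -> float:
    """Area under the precision-recall curve.

    detections: (confidence, is_true_positive) for every predicted box.
    num_ground_truth: total number of annotated fire regions.
    """
    if num_ground_truth == 0:
        return 0.0
    ranked = sorted(detections, key=lambda d: d[0], reverse=True)
    precisions, recalls = [], []
    true_positives = 0
    for rank, (_, hit) in enumerate(ranked, start=1):
        true_positives += int(hit)
        precisions.append(true_positives / rank)
        recalls.append(true_positives / num_ground_truth)
    # Replace each precision with the best precision at any higher recall.
    for i in range(len(precisions) - 2, -1, -1):
        precisions[i] = max(precisions[i], precisions[i + 1])
    # Sum rectangle areas under the resulting step curve.
    ap, previous_recall = 0.0, 0.0
    for precision, recall in zip(precisions, recalls):
        ap += (recall - previous_recall) * precision
        previous_recall = recall
    return ap


def frames_per_second(num_frames: int, elapsed_seconds: float) -> float:
    """Detection speed: frames processed per second of wall-clock time."""
    return num_frames / elapsed_seconds
```

An AP of 1.0 means every annotated fire region was found and no false detection was ranked above a correct one.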

The authors conclude that their model offers three improvements over other CNN-based classification approaches: (1) it can be used efficiently in practical applications in chemical factories and other industries with a high risk of fire incidents; (2) it can detect fire in real time with excellent precision and speed; and (3) it can help detect fire at a very early stage, facilitating emergency management and preventing loss of human life and property.


© 2022 Aiex.ai All Rights Reserved.