


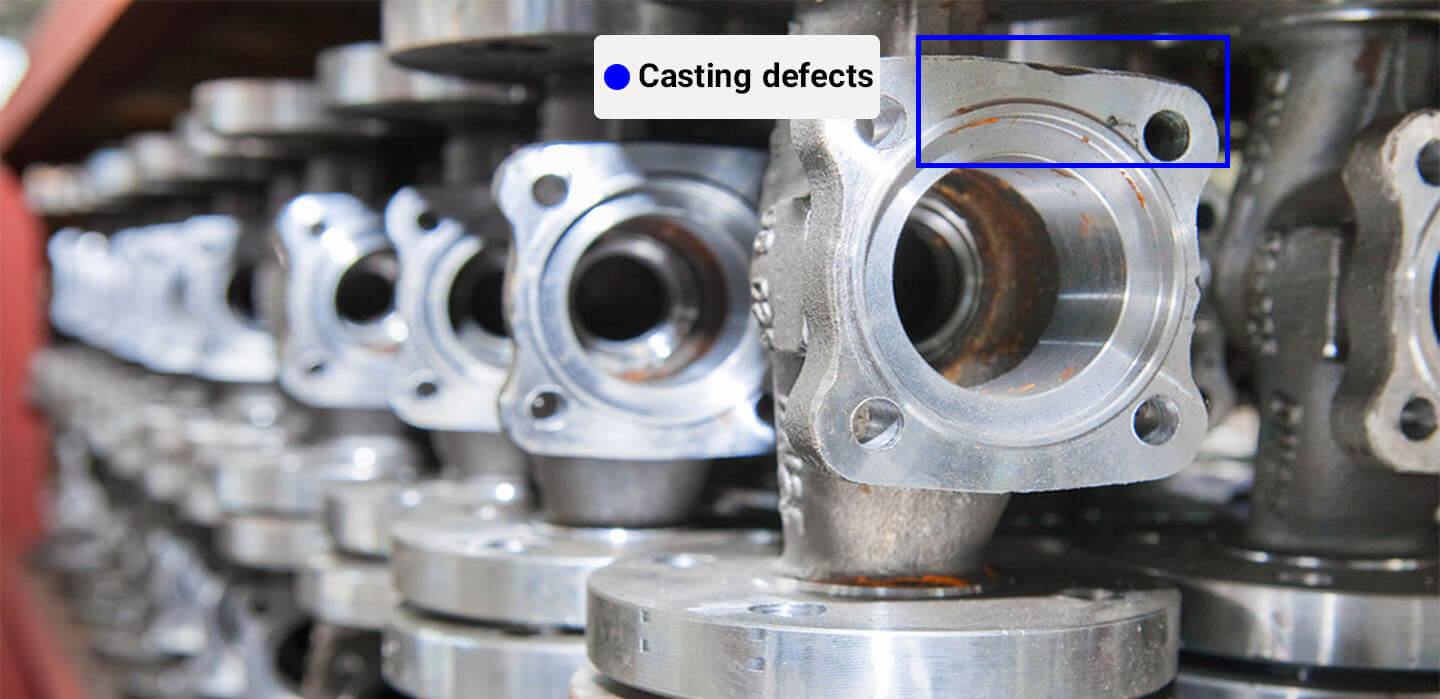

Low-alloy aluminum parts are widely used in various industries, especially the automotive industry. Cracks, bubbles, slags, and inclusions must be thoroughly identified in parts such as wheels, knuckles, and gearboxes to guarantee high-quality products. One quality control solution for these products is X-ray inspection, because X-rays penetrate objects and reveal internal defects that are invisible to the naked eye (Figure 1). Computer vision (CV) models can be extremely effective in automating quality control for these types of products.

Recently, Professor Domingo Mery from the Department of Computer Science at the Pontifical Catholic University of Chile published a peer-reviewed paper in the journal “Machine Vision and Applications”. In the paper, he develops and compares eight state-of-the-art deep object detection methods (based on YOLO, RetinaNet, and EfficientDet) for detecting aluminum casting defects. The author proposes a simple, effective, and fast training strategy: superimposing simulated defects on a small number of defect-free X-ray images of castings, which avoids manual annotation.

Quality control automation using CV has been under development for over three decades. Nowadays, CV methods based on deep-learning algorithms are pushing the limits even further. The key idea of deep-learning algorithms is to replace handcrafted features with hierarchical feature extraction, so that the learned features are discriminative without requiring a sophisticated classifier.

The author notes that the adoption of CV-based models for industrial defect detection in X-ray images has been quite slow for the following reasons:

To address these shortcomings, the author suggests the following solutions:

Although novel object detection models such as YOLO and RetinaNet have been widely developed in recent years, it is still challenging to apply them to defect inspection in aluminum castings because training datasets are scarce. To tackle this issue, simulated defects can be superimposed onto the original X-ray images.

All object-detection models used in industry or academia can be categorized into four groups: (i) classic methods, (ii) methods based on multiple views, (iii) methods based on computed tomography, and (iv) methods based on deep learning (more details about each group can be found in the reference). As novel object-detection models exhibit the best performance, the author uses eight different modern object-detection algorithms for training the models. Additionally, two baseline models are used for comparison: CLP-SVM, a classic method that combines handcrafted features with an SVM classifier, and Xnet, which is based on a convolutional neural network (CNN).

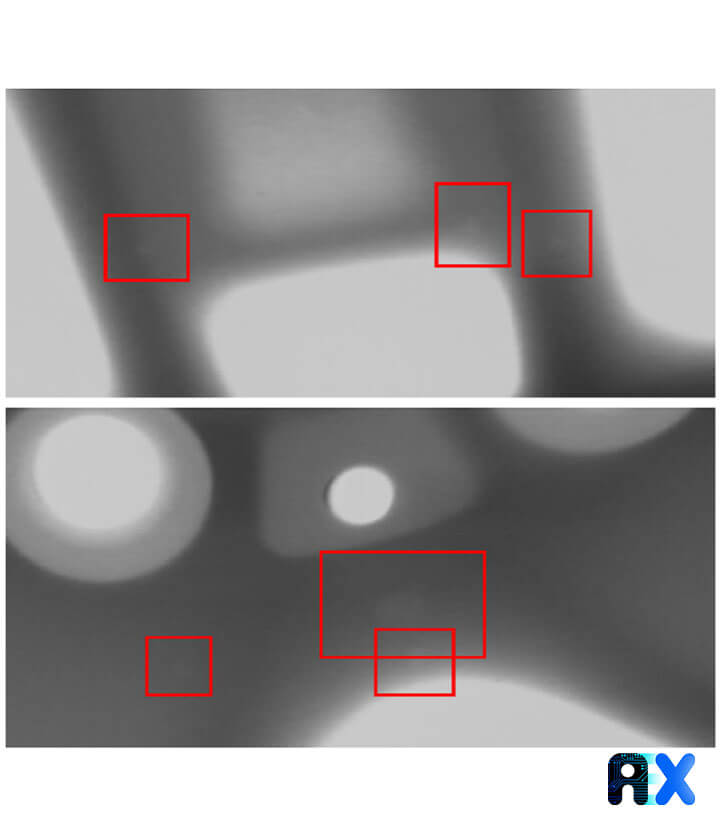

CV-based models can be applied through image classification or object detection. Image classification assigns an X-ray image to one class and is typically applied when there is only one object to detect per image. For example, given a small 32 × 32 pixel patch cut from an X-ray image, the trained model can classify it as ‘defective’ or ‘not defective’. Object detection models can recognize several objects in an X-ray image, and the location of each recognized object is given by a bounding box surrounding it (Figure 2).

Applying the simple sliding-window methodology to defect detection in aluminum castings, based on image classification, requires a huge number of patches at several patch sizes, which may make the computational time prohibitive. Generally, two main approaches can be used to overcome this problem: (i) detection in two stages, and (ii) detection in one stage. The latter approach mostly outperforms the former in terms of both accuracy and speed. Therefore, only the one-stage approach is discussed in detail.
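To give a feel for why the sliding-window approach becomes expensive, the following minimal sketch counts how many patches a classifier would have to evaluate for one image. The function names, the stride rule (a fixed fraction of the patch size), and any image or patch sizes you pass in are illustrative assumptions and are not taken from the paper.

```python
def count_windows(height, width, patch, stride):
    """Number of patch x patch windows that fit in an image at a given stride."""
    if patch > height or patch > width:
        return 0
    rows = (height - patch) // stride + 1
    cols = (width - patch) // stride + 1
    return rows * cols


def total_windows(height, width, patch_sizes, stride_fraction=0.25):
    """Total windows over several patch sizes.

    Assumption: the stride is a fixed fraction of the patch size (at least 1 px).
    """
    total = 0
    for patch in patch_sizes:
        stride = max(1, int(patch * stride_fraction))
        total += count_windows(height, width, patch, stride)
    return total


if __name__ == "__main__":
    # Hypothetical image size and patch sizes, chosen only for illustration.
    print(total_windows(512, 512, patch_sizes=[16, 32, 64]))
```

Every counted window is one forward pass of the classifier, so the total grows quickly as more patch sizes or smaller strides are added. One-stage detectors avoid this by processing the whole image once.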

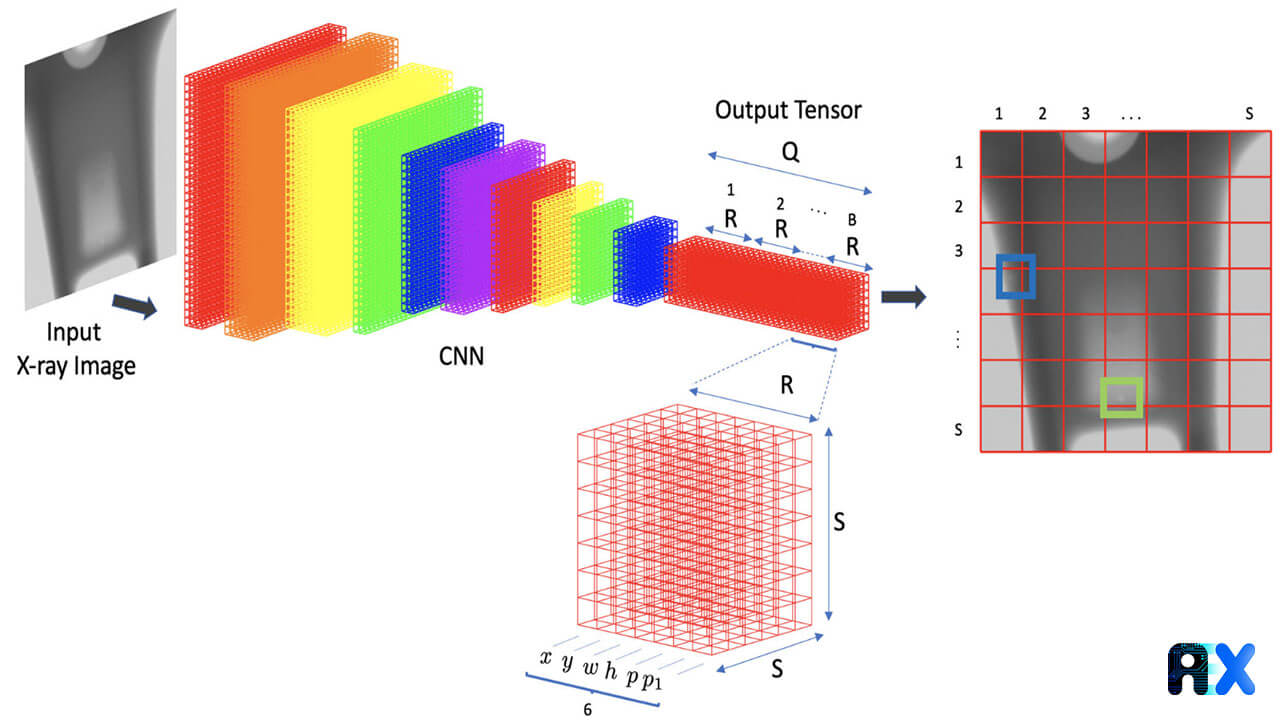

These approaches use a single CNN to train a model effectively and quickly on both localization and classification. This means that bounding box prediction and the subsequent estimation of class probabilities occur in one stage. This category includes YOLO (v3 (versions SPP and Tiny), v5 (versions ‘s’, ‘l’, ‘m’ and ‘x’)), EfficientDet, and RetinaNet. Since YOLO shows the best performance in this case, only this algorithm is discussed in this article.

YOLO is a single CNN model that goes over an image only once and simultaneously performs bounding box prediction (localization) and category probability estimation (classification) for objects of interest. Since the input image is processed in a single pass by the CNN, the prediction speed is excellent. More details about the YOLO algorithms and various versions can be found here.

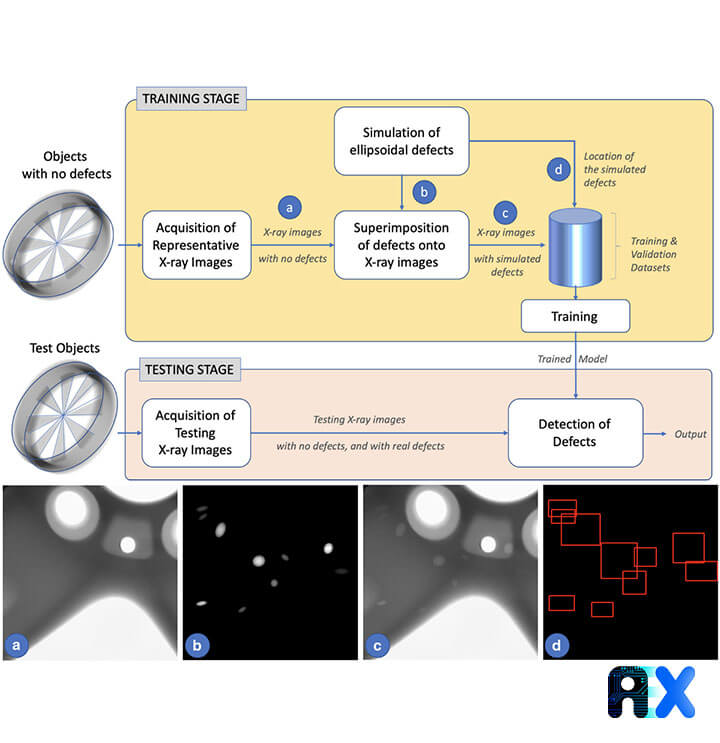

Figure 3 depicts the overall workflow for detecting defects in aluminum castings using X-ray images. Like other CV-based models, it consists of two stages: training and testing the model. To train a model, real X-ray images of aluminum castings with only simulated defects are used because defects in real samples are rare. On the other hand, for the testing dataset, real X-ray images of aluminum castings with real defects are utilized to evaluate the model in a real scenario.

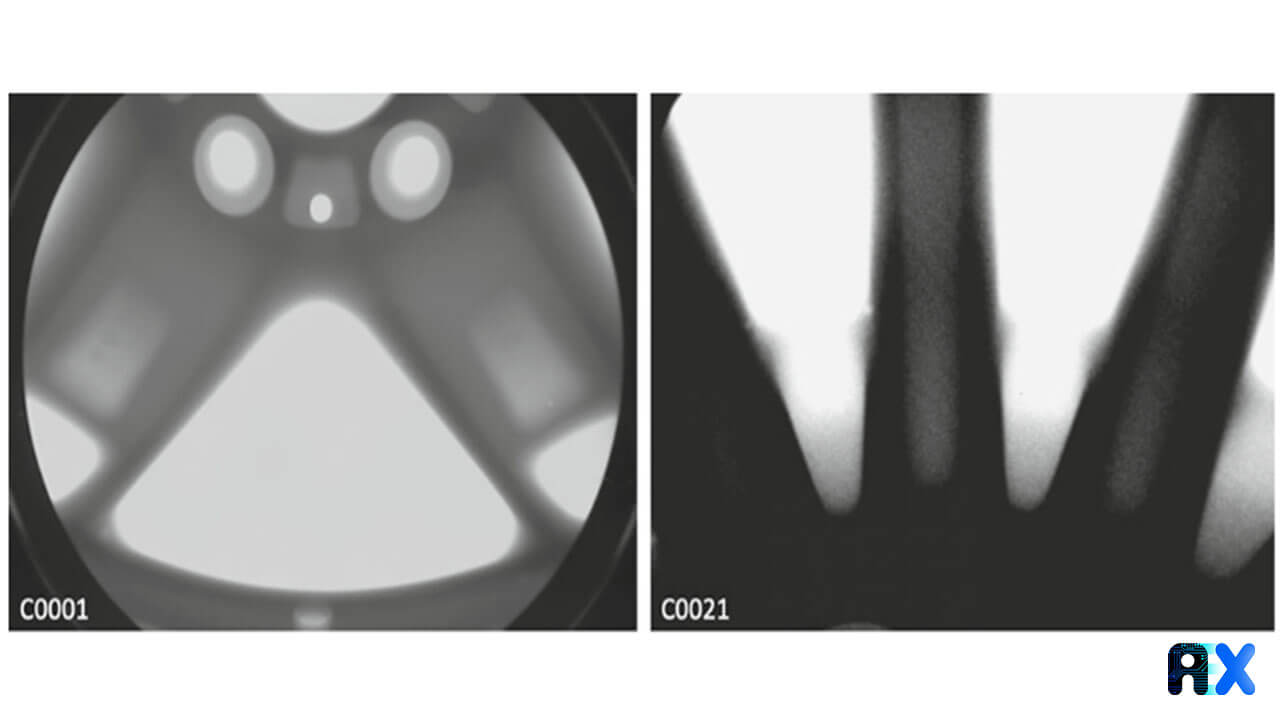

The GDXray dataset is used for the experiments: series C0001 (aluminum wheel), with 72 X-ray images, for the main experiments, and series C0021, with 37 X-ray images, for an additional experiment. Both series have an annotated ground truth that includes real defects.

The following procedures must be performed before training and validating models:

The author simulated ellipsoidal defects to train the defect detection model for aluminum castings. The simulation details are described in the paper, and readers can refer to it for more information.
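As a rough illustration of the idea (not the paper's exact procedure), the sketch below superimposes one ellipsoidal void on a defect-free image and returns its bounding box, so no manual annotation is needed. It assumes a simple exponential attenuation model in which less material along the ray yields a higher intensity. The function name, the `mu` value, and the intensity convention are all assumptions made for this example.

```python
import numpy as np


def superimpose_ellipsoid(image, center, semi_axes, mu=0.05):
    """Brighten `image` where a hypothetical ellipsoidal void would sit.

    image     : 2-D float array of X-ray intensities
    center    : (row, col) of the defect centre
    semi_axes : (a_row, a_col, a_depth) semi-axes in pixels
    mu        : assumed attenuation coefficient per pixel of thickness
    Returns the new image and the bounding box (x_min, y_min, x_max, y_max).
    """
    rows, cols = np.indices(image.shape)
    a_r, a_c, a_d = semi_axes
    # Normalised squared distance from the centre in the image plane.
    rho2 = ((rows - center[0]) / a_r) ** 2 + ((cols - center[1]) / a_c) ** 2
    # Chord length of the ellipsoid along the beam; zero outside the ellipse.
    thickness = 2.0 * a_d * np.sqrt(np.clip(1.0 - rho2, 0.0, None))
    # Assumed model: less material along the ray means less attenuation.
    out = image * np.exp(mu * thickness)
    box = (center[1] - a_c, center[0] - a_r, center[1] + a_c, center[0] + a_r)
    return out, box


if __name__ == "__main__":
    clean = np.full((64, 64), 100.0)  # stand-in for a defect-free X-ray image
    defective, box = superimpose_ellipsoid(clean, center=(32, 32), semi_axes=(5, 8, 4))
    print(box)
```

Because the defect is generated, its bounding box is known exactly and can be written straight into the training labels. In practice, the output would also need clipping to the valid intensity range of the image format.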

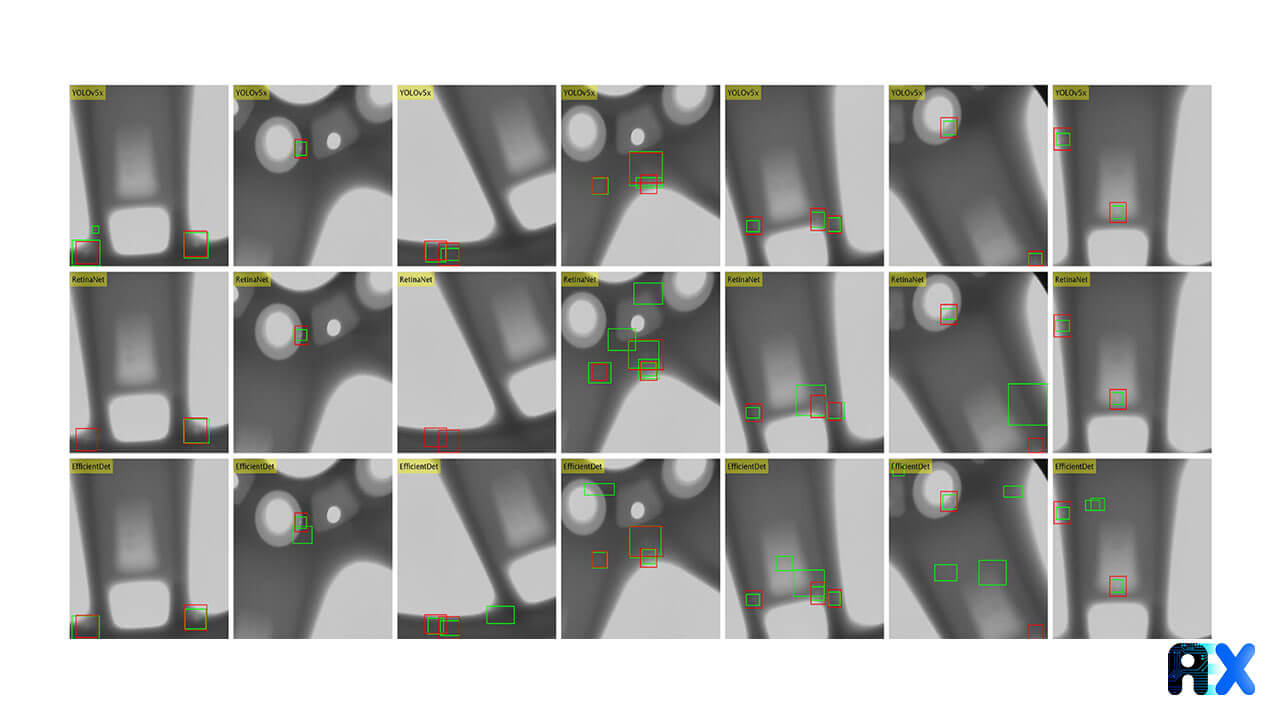

Figure 4 illustrates the results for some of the testing datasets. The YOLO-based model shows superior performance compared to other models.

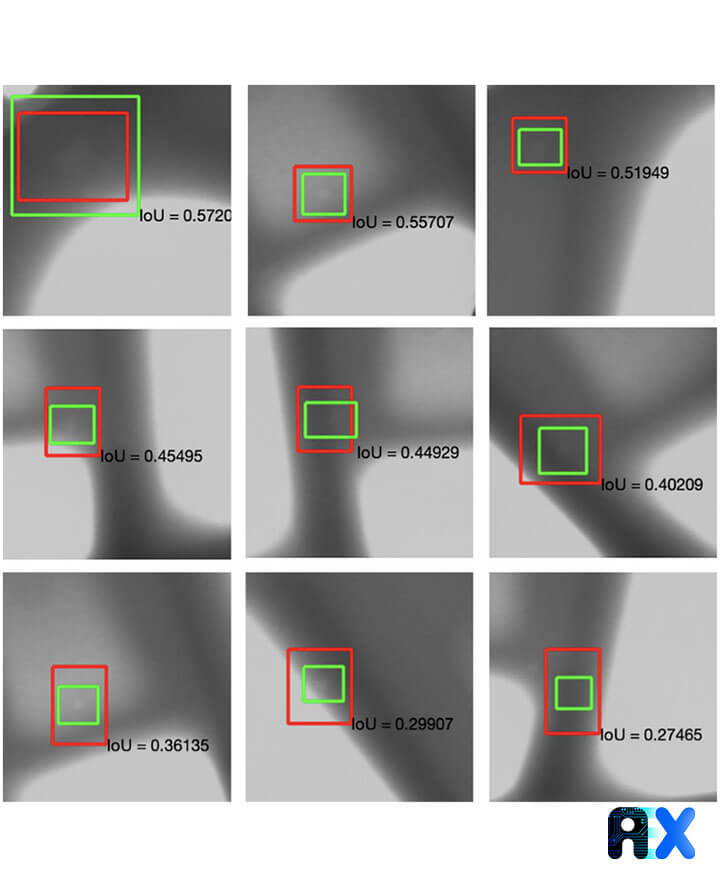

The Intersection over Union (IoU) score is calculated to evaluate the performance of the detectors. Under this criterion, a defect counts as detected if IoU > α, where α is called the IoU threshold. The performance of each model is evaluated for α = 1/10, 1/5, 1/4, 1/3, and 1/2. Figure 5 displays the bounding boxes along with the IoU values at α = 1/4.
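The IoU criterion can be sketched in a few lines. The box format `(x_min, y_min, x_max, y_max)` and the function names are assumptions made for this example.

```python
def iou(box_a, box_b):
    """Intersection over Union of two boxes given as (x_min, y_min, x_max, y_max)."""
    ax1, ay1, ax2, ay2 = box_a
    bx1, by1, bx2, by2 = box_b
    # Width and height of the overlap; zero when the boxes do not intersect.
    inter_w = max(0.0, min(ax2, bx2) - max(ax1, bx1))
    inter_h = max(0.0, min(ay2, by2) - max(ay1, by1))
    inter = inter_w * inter_h
    union = (ax2 - ax1) * (ay2 - ay1) + (bx2 - bx1) * (by2 - by1) - inter
    return inter / union if union > 0 else 0.0


def is_detected(predicted_box, ground_truth_box, alpha=0.25):
    """A defect counts as detected when IoU exceeds the threshold alpha."""
    return iou(predicted_box, ground_truth_box) > alpha


if __name__ == "__main__":
    gt = (0, 0, 10, 10)
    pred = (5, 0, 15, 10)  # overlaps half of the ground-truth box
    print(iou(pred, gt), is_detected(pred, gt, alpha=0.25))
```

A lower α accepts looser localization, which is why the same detector yields different precision and recall values at different thresholds.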

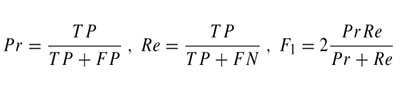

From the IoU-criterion, the statistics of true positives (TP), false positives (FP), and false negatives (FN) can be obtained to calculate the precision (Pr), recall (Re), and F1 values using the following equations:

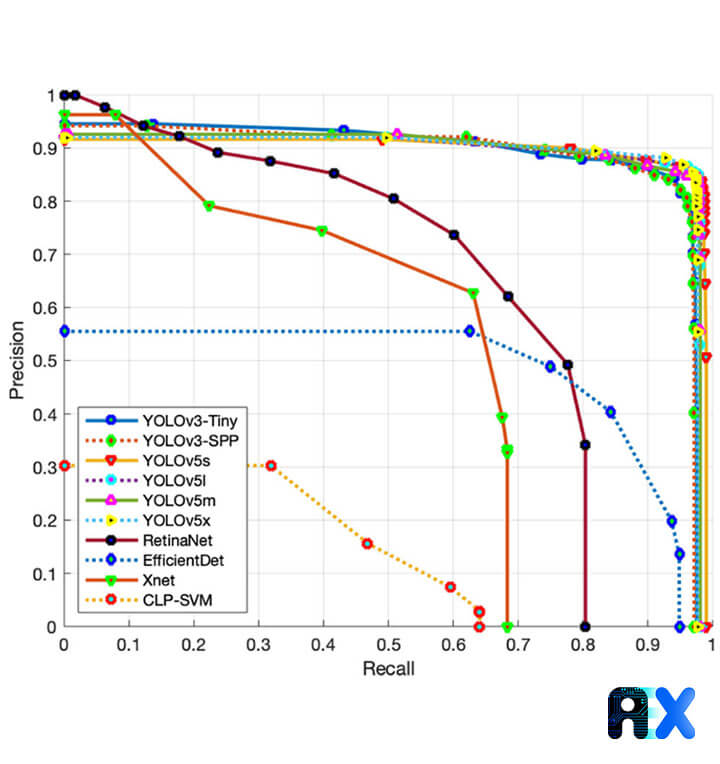
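For readers who prefer code to formulas, the following sketch computes the three metrics from the TP, FP, and FN counts using their standard definitions, which are assumed to match the equations shown in the figure. The function name and the zero-division handling are choices made for this example.

```python
def precision_recall_f1(tp, fp, fn):
    """Standard precision, recall and F1 from detection counts."""
    # Precision: fraction of detections that are real defects.
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    # Recall: fraction of real defects that were detected.
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    # F1: harmonic mean of precision and recall.
    denom = precision + recall
    f1 = 2.0 * precision * recall / denom if denom else 0.0
    return precision, recall, f1


if __name__ == "__main__":
    # Hypothetical counts, for illustration only.
    print(precision_recall_f1(tp=8, fp=2, fn=2))
```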

Figure 6 illustrates the precision-recall curve for α = 0.25.

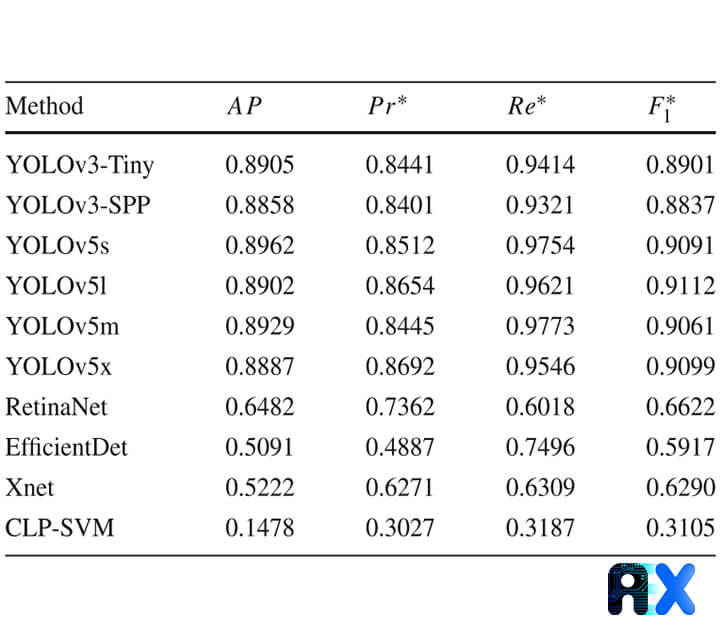

Table 1 presents and compares the Pr∗ and Re∗ values at the maximum F1 value (F1∗) for each trained model. The average precision (AP) is also calculated as the area under the precision-recall curve, and Table 1 gives the AP value for α = 1/4. According to the AP values, three distinct groups can be recognized: (i) YOLO-based methods with AP > 0.88, (ii) RetinaNet, EfficientDet, and Xnet with AP = 0.5–0.65, and (iii) CLP-SVM with AP < 0.15. YOLO-based models clearly perform much better than the baseline methods (Xnet and CLP-SVM), with the YOLOv5 variants outperforming every other method. On the other hand, RetinaNet and EfficientDet show poor performance as they are designed for large objects.

Table 1 The performance evaluation for α = 1/4.

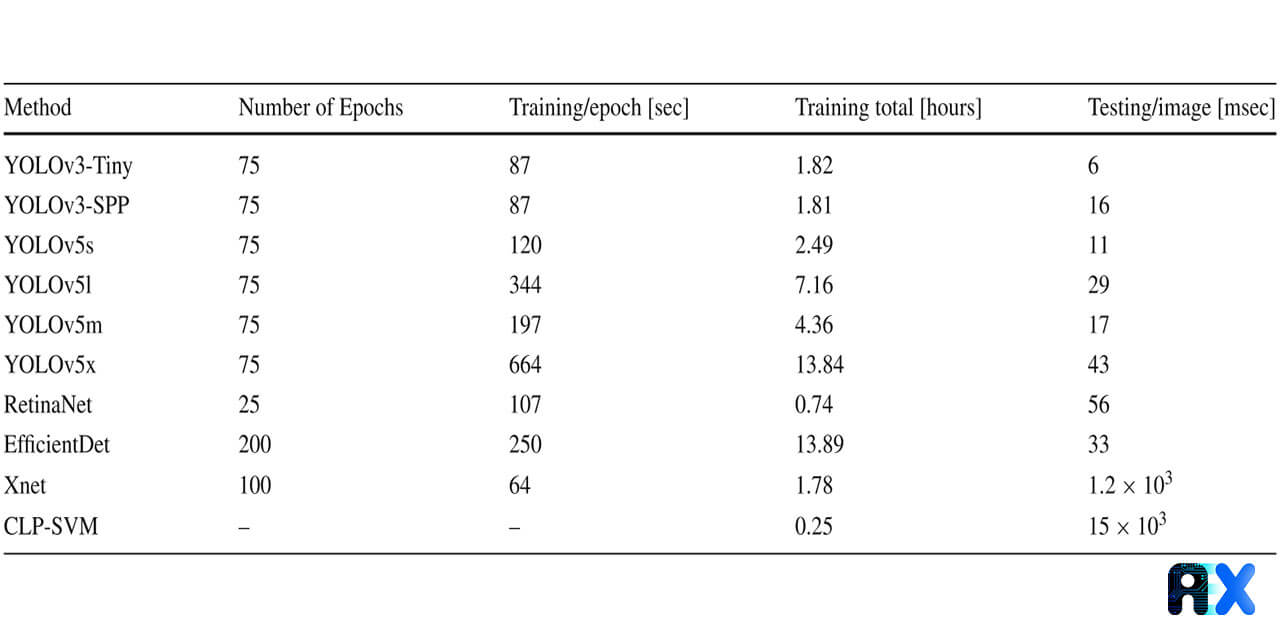
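The two summary quantities in Table 1 can be derived from a sampled precision-recall curve as sketched below. The article does not state which integration or interpolation scheme the paper uses, so the trapezoidal rule here is an assumption, and the function names are hypothetical.

```python
def average_precision(recalls, precisions):
    """Area under a sampled precision-recall curve (trapezoidal rule, assumed)."""
    points = sorted(zip(recalls, precisions))  # order by increasing recall
    area = 0.0
    for (r0, p0), (r1, p1) in zip(points, points[1:]):
        area += (r1 - r0) * (p0 + p1) / 2.0
    return area


def best_f1_point(recalls, precisions):
    """Return (F1*, Pr*, Re*): the curve point with the highest F1."""
    best = (0.0, 0.0, 0.0)
    for r, p in zip(recalls, precisions):
        f1 = 2.0 * p * r / (p + r) if (p + r) else 0.0
        if f1 > best[0]:
            best = (f1, p, r)
    return best


if __name__ == "__main__":
    # Hypothetical curve samples, for illustration only.
    recalls = [0.0, 0.5, 0.8, 1.0]
    precisions = [1.0, 0.95, 0.85, 0.4]
    print(average_precision(recalls, precisions))
    print(best_f1_point(recalls, precisions))
```

Each point on the curve corresponds to one confidence threshold of the detector, so F1∗ identifies the operating point at which Pr∗ and Re∗ are reported.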

Table 2 presents and compares the computation time for each trained model. According to the computation time, the trained models can be categorized into two groups: (i) modern object detection methods, which take only a few tens of milliseconds per image, and (ii) baseline methods, which take more than 1 second per image.

Table 2 Computation time for each trained model.

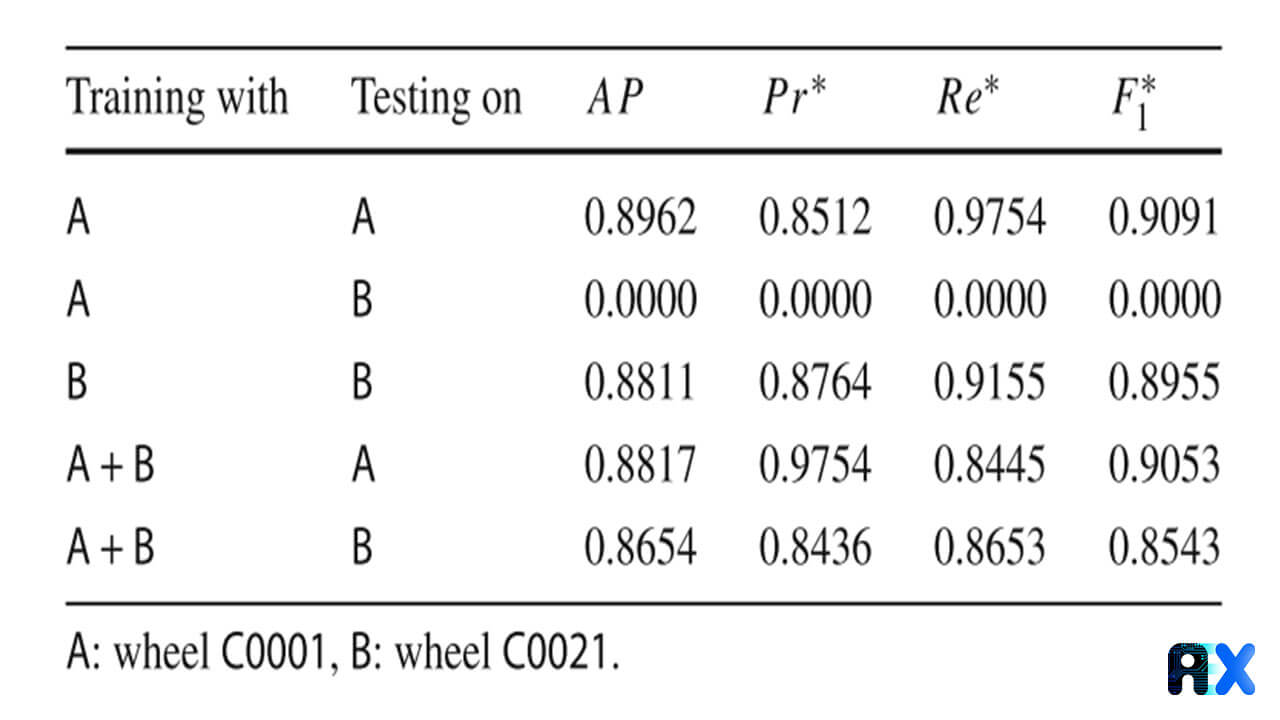

The main drawback of the proposed method is that each casting type needs its own ad-hoc trained model. For example, if a model is trained on images of one specific wheel type and is then tested on X-ray images of another wheel type, it may not perform well. Figure 7 shows two different wheel types.

As the model is trained on the regular structures of one casting type, this issue is to be expected. However, if a new model is trained for each specific wheel type, the model’s performance is satisfactory (Table 3).

Table 3 Evaluation of YOLOv5s on two wheel-types.

To deal with this shortcoming, we could train a single model on all casting types. Nonetheless, this solution will not work when the model is tested on a new casting type. In addition, the computational time of this training is higher because a larger training dataset is used, and the effectiveness of the model must be evaluated on every included casting type. For these reasons, the author recommends training a specific model for each casting type. As the training process is relatively short (only 2.5 hours), this should not pose a problem for the industry.

YOLOv5 models achieved the best evaluation metrics. At α = 0.25, 97.54% of all existing defects were correctly detected (recall), and 85.12% of all detections were true positives (precision). Additionally, YOLOv5 models were trained in just 2.5 hours, and the computational time in the testing stage was only 11 ms per image (about 90 images per second). Hence, the author's proposed approach could satisfy the requirements of the industry in the following regards:

In the future, the simulation could be extended with random defect shapes to cover other kinds of defects, such as cracks, which would be very useful in the automated inspection of welds.

