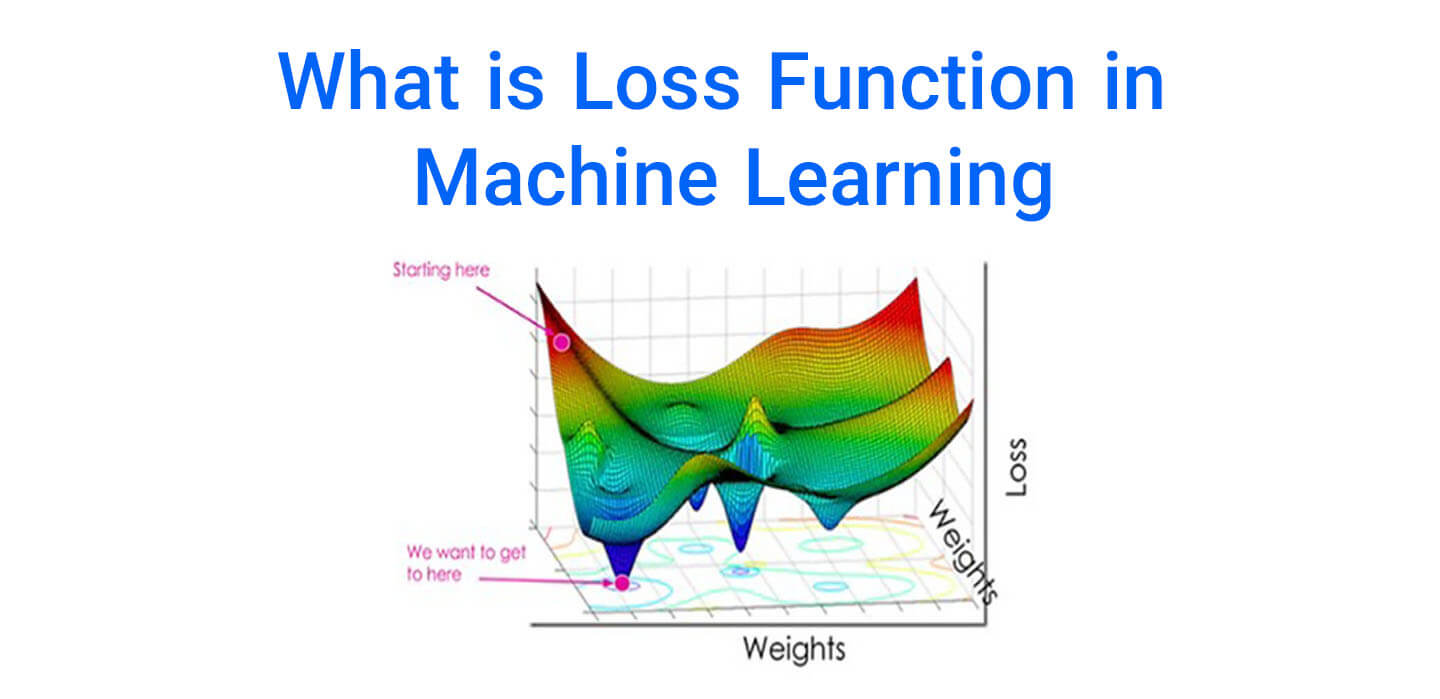

Most machine learning algorithms work by minimizing or maximizing an "objective function". Loss functions are the objective functions that are meant to be minimized; they are sometimes also called "cost functions". A loss function measures how far a model's predictions are from the actual values, so it lets us evaluate how well the model predicts new values.

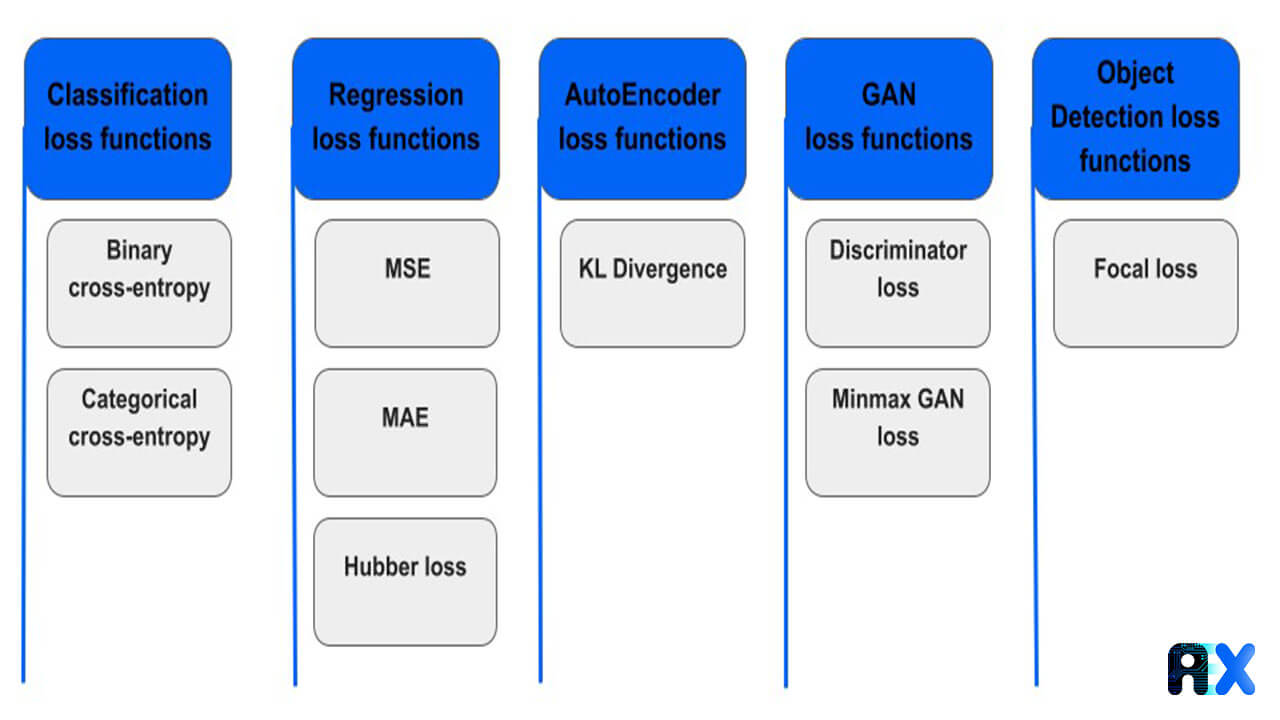

There are two main types of loss functions in machine learning: regression loss functions and classification loss functions.

In this section, we discuss the loss functions used in regression models.

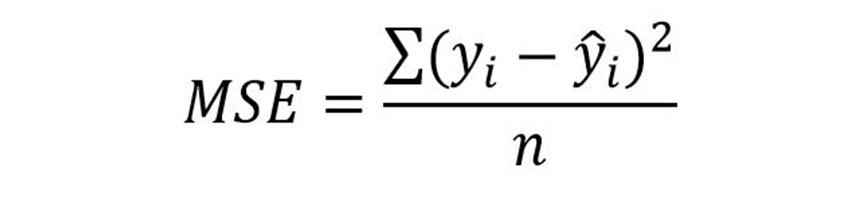

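Mean Squared Error (MSE) is one of the best-known and most commonly used loss functions in regression analysis. It is the mean of the squared differences between the predicted and actual values:

$$\mathrm{MSE} = \frac{1}{n}\sum_{i=1}^{n}\left(y_i - \hat{y}_i\right)^2$$

where $y_i$ is the actual value, $\hat{y}_i$ is the predicted value, and $n$ is the number of samples.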

Because MSE squares the differences, the loss is a parabola as a function of the prediction error. Its advantages are that it is easy to understand and has a single minimum, with no other local minima. Its main disadvantage is that it is not robust to outliers, because squaring magnifies large errors.
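As a minimal sketch of the MSE calculation described above, the following plain-Python function averages the squared differences. The function name `mean_squared_error` and the sample values are illustrative assumptions, not taken from the article.

```python
def mean_squared_error(y_true, y_pred):
    """Mean of the squared differences between actual and predicted values."""
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("inputs must be non-empty and of equal length")
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)


# Illustrative values (assumed for this example).
y_true = [3.0, 5.0, 2.0, 7.0]
y_pred = [2.0, 5.0, 4.0, 8.0]

# Squared errors are 1, 0, 4, 1, so the mean is 6 / 4.
mse = mean_squared_error(y_true, y_pred)
print(mse)  # 1.5
```

Note that the single error of 2 contributes 4 of the 6 units of total squared error, which hints at why large errors dominate this loss.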

Mean Absolute Error (MAE) is another loss function with interesting properties. Like MSE, it is based on the difference between the predicted and actual values, but it ignores the direction of that difference: MAE is the mean of the absolute differences between the predicted and actual values. Its advantages are that it is intuitive, easy to use, and robust to outliers. Its main disadvantage is that it is not differentiable at zero, so plain gradient descent cannot be applied directly and we have to rely on sub-gradient calculations.
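To make the robustness claim concrete, here is a small sketch that computes MAE and MSE on the same predictions, with and without one outlier. The function names and the data are assumptions chosen for illustration.

```python
def mean_absolute_error(y_true, y_pred):
    """Mean of the absolute differences between actual and predicted values."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def mean_squared_error(y_true, y_pred):
    """Mean of the squared differences, included only for comparison."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)


# Illustrative values (assumed): every prediction is off by 0.5 ...
y_true = [1.0, 2.0, 3.0, 4.0]
clean_pred = [1.5, 2.5, 2.5, 3.5]
# ... except that here the last prediction is off by 10 (an outlier).
outlier_pred = [1.5, 2.5, 2.5, 14.0]

mae_clean = mean_absolute_error(y_true, clean_pred)      # 0.5
mae_outlier = mean_absolute_error(y_true, outlier_pred)  # 2.875
mse_clean = mean_squared_error(y_true, clean_pred)       # 0.25
mse_outlier = mean_squared_error(y_true, outlier_pred)   # 25.1875

print(mae_outlier / mae_clean)  # 5.75
print(mse_outlier / mse_clean)  # 100.75
```

In this example the single outlier multiplies MAE by 5.75 but multiplies MSE by 100.75, which is the sense in which MAE is the more robust of the two.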

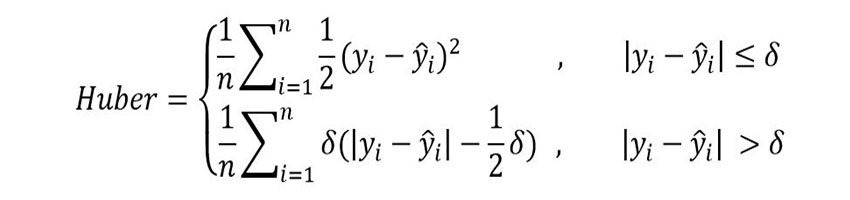

Huber loss is less sensitive to outliers than MSE and, unlike MAE, it is differentiable at zero, so it is easy to minimize with gradient-based methods. The parameter δ sets the error size at which the loss switches from quadratic to linear, so it changes the shape of the loss function, and choosing a good value for it can be difficult. If δ is very large, Huber loss behaves like MSE; as δ approaches zero, it behaves like MAE.
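The sketch below assumes the standard definition of Huber loss, since the equation itself is not reproduced in the text: for an error $a = y - \hat{y}$, the loss is $\tfrac{1}{2}a^2$ when $|a| \le \delta$ and $\delta\,(|a| - \tfrac{1}{2}\delta)$ otherwise. The function name and the data are illustrative.

```python
def huber_loss(y_true, y_pred, delta=1.0):
    """Mean Huber loss: quadratic for small errors, linear for large ones."""
    total = 0.0
    for t, p in zip(y_true, y_pred):
        err = abs(t - p)
        if err <= delta:
            total += 0.5 * err ** 2               # quadratic (MSE-like) region
        else:
            total += delta * (err - 0.5 * delta)  # linear (MAE-like) region
    return total / len(y_true)


# Illustrative values (assumed): three errors of 0.5 and one outlier error of 10.
y_true = [1.0, 2.0, 3.0, 4.0]
y_pred = [1.5, 2.5, 2.5, 14.0]

small_delta = huber_loss(y_true, y_pred, delta=1.0)    # outlier penalized linearly
large_delta = huber_loss(y_true, y_pred, delta=100.0)  # every error is quadratic

print(small_delta)  # 2.46875
print(large_delta)  # 12.59375
```

With δ = 100 every error falls in the quadratic region, so the result is exactly half the MSE of the same predictions (the factor of one half comes from the definition assumed here). With δ = 1 the outlier is penalized only linearly and the loss is much smaller.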

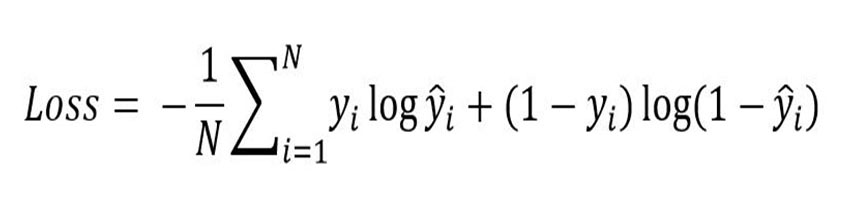

Binary classification problems involve two classes. Binary cross-entropy compares each predicted probability with the actual class label, which is either 0 or 1. It then computes a score that penalizes each probability according to its distance from the expected value, so the loss shows how close or far the predictions are from the actual values.
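A minimal sketch of this calculation, assuming the usual form of binary cross-entropy, $-\frac{1}{n}\sum_i \left[y_i \log p_i + (1 - y_i)\log(1 - p_i)\right]$, where $p_i$ is the predicted probability of class 1. The function name, the clipping constant `eps`, and the sample values are assumptions made for this example.

```python
import math


def binary_cross_entropy(y_true, y_prob, eps=1e-12):
    """Mean binary cross-entropy for labels in {0, 1} and probabilities of class 1."""
    total = 0.0
    for y, p in zip(y_true, y_prob):
        # Clip probabilities so that log(0) is never evaluated.
        p = min(max(p, eps), 1.0 - eps)
        total += -(y * math.log(p) + (1 - y) * math.log(1.0 - p))
    return total / len(y_true)


# Illustrative labels and predictions (assumed).
labels = [1, 0, 1, 0]
confident_right = [0.9, 0.1, 0.8, 0.2]
confident_wrong = [0.1, 0.9, 0.2, 0.8]

loss_right = binary_cross_entropy(labels, confident_right)
loss_wrong = binary_cross_entropy(labels, confident_wrong)
print(loss_right, loss_wrong)
```

Predictions close to the true labels give a small loss, while confident but wrong predictions are penalized heavily. An uninformative prediction of 0.5 for every sample gives a loss of ln 2.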

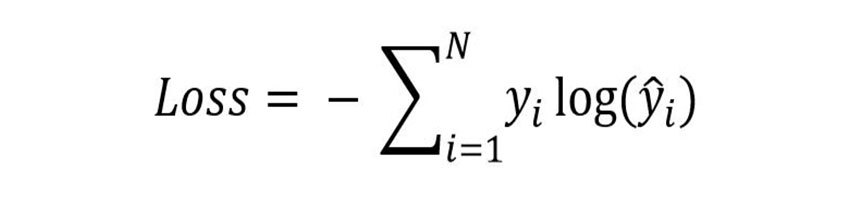

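Categorical cross-entropy is used in multi-class classification problems, including softmax regression. It can be calculated as follows:

$$\mathrm{CCE} = -\frac{1}{n}\sum_{i=1}^{n}\sum_{c=1}^{C} y_{i,c}\,\log\left(\hat{y}_{i,c}\right)$$

where $C$ is the number of classes, $y_{i,c}$ is 1 if sample $i$ belongs to class $c$ and 0 otherwise, and $\hat{y}_{i,c}$ is the predicted probability that sample $i$ belongs to class $c$.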
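As a rough illustration, the sketch below computes categorical cross-entropy for one-hot labels, using a softmax to turn raw scores into class probabilities. It assumes the common form in which the loss is the mean negative log-probability assigned to the true class; the function names, `eps`, and the sample scores are hypothetical.

```python
import math


def softmax(logits):
    """Convert raw scores into probabilities that sum to 1."""
    m = max(logits)  # subtract the maximum for numerical stability
    exps = [math.exp(z - m) for z in logits]
    s = sum(exps)
    return [e / s for e in exps]


def categorical_cross_entropy(y_true, y_prob, eps=1e-12):
    """Mean cross-entropy; y_true holds one-hot rows, y_prob holds probability rows."""
    total = 0.0
    for truth, probs in zip(y_true, y_prob):
        # Only the true class contributes, because the other labels are 0.
        total += -sum(t * math.log(max(p, eps)) for t, p in zip(truth, probs))
    return total / len(y_true)


# Illustrative three-class example (assumed scores).
one_hot = [[1, 0, 0], [0, 0, 1]]
probs = [softmax([2.0, 0.5, 0.1]), softmax([0.2, 0.3, 3.0])]

loss = categorical_cross_entropy(one_hot, probs)
print(loss)
```

If the model assigns equal probability to all three classes, the loss equals ln 3, which is a useful baseline when reading training curves.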

© 2022 Aiex.ai All Rights Reserved.