Contact Info

133 East Esplanade Ave, North Vancouver, Canada

Expansive data I/O tools

Extensive data management tools

Dataset analysis tools

Data generation tools to increase yields

Top of the line hardware available 24/7

The AIEX Deep Learning platform provides all the tools necessary for a complete deep learning workflow, from data management to model training and, finally, deployment of the trained models. You can easily transform your visual inspections with the trained models, saving time and money while increasing accuracy and speed.

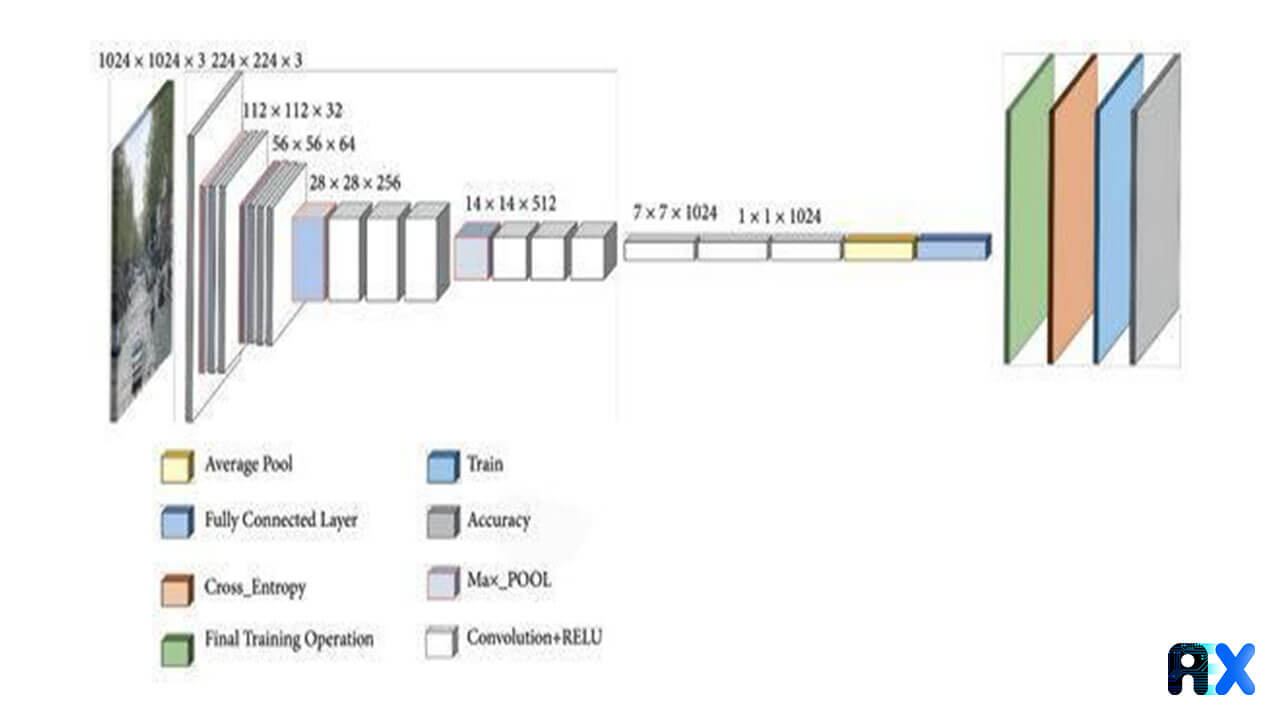

One of the main steps in computer vision is feature extraction. In the past, features were extracted with conventional algorithms or filters applied to the input data before further processing. With the introduction of deep learning, neural networks have become more powerful and can process larger amounts of data with less manual effort. Convolutional neural networks (CNNs) make it possible to work with large-scale data, and they are also well suited to feature extraction. Choosing a CNN for feature extraction, or for other parts of a deep learning model, is not arbitrary: the complexity of the target task and the way the model is implemented both strongly affect the outcome. Some proposed networks have become the default choice in a variety of data analysis domains. In a deep learning model, the backbone is the part responsible for feature extraction, and it is usually built from one of these networks. In this section, we give an overview of the most prominent backbone networks implemented on the AIEX deep learning platform.

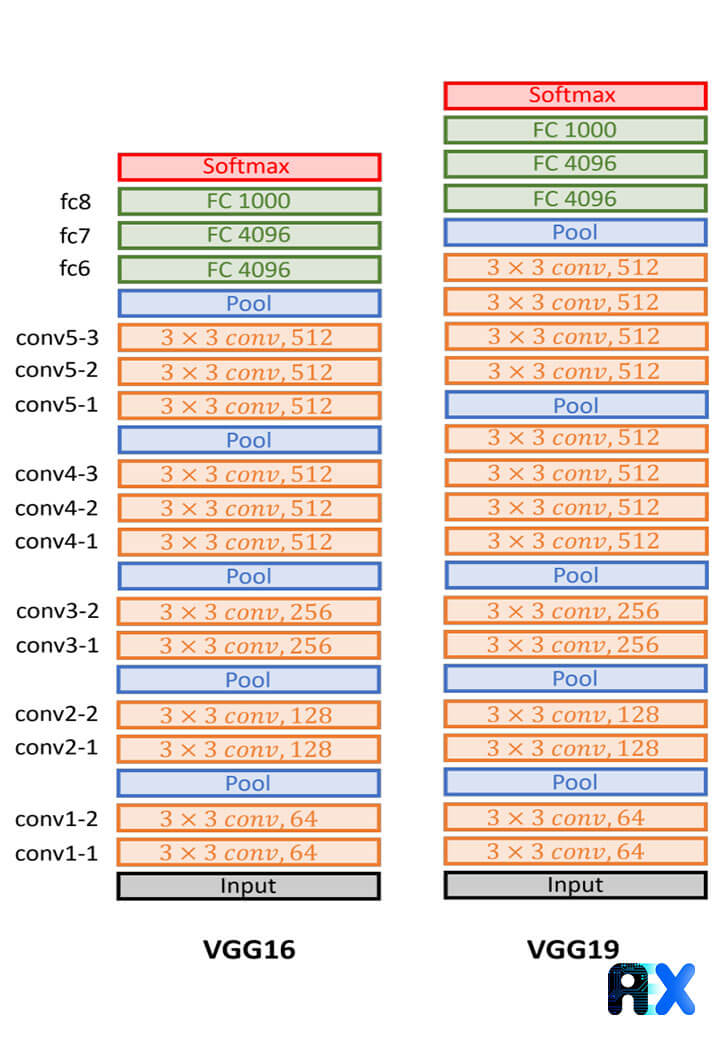

The VGG family, which includes VGG-16 and VGG-19, is one of the best-known backbones in computer vision. VGG networks have proven effective in many tasks, including image classification, object detection, and segmentation. They are also widely used as the backbone (feature extractor) in other models, such as R-CNN and Faster R-CNN. VGG-16, one of the fundamental deep learning backbones, was developed in 2014. Its 16 weight layers are 13 convolutional layers and 3 fully connected layers; it also contains 5 max-pooling layers and uses ReLU activations. VGG-16 has eight more weight layers than AlexNet and contains about 138 million parameters. VGG-19 is a deeper version of VGG-16 with 3 more convolutional layers (16 convolutional, 5 max-pooling, and 3 fully connected layers) and about 144 million parameters.
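The layer and parameter counts above can be reproduced with simple arithmetic. The sketch below is our own illustration, not AIEX or library code: it assumes the standard ImageNet setup from the VGG paper (224×224 RGB input, 3×3 convolutions with biases, 4096-unit fully connected layers, 1000 classes), and the config lists and `vgg_param_count` name are hypothetical.

```python
# Sketch: count VGG weights (conv + fully connected) from a layer config.
# "M" marks a 2x2 max-pooling layer, which has no parameters.
VGG16 = [64, 64, "M", 128, 128, "M", 256, 256, 256, "M",
         512, 512, 512, "M", 512, 512, 512, "M"]
VGG19 = [64, 64, "M", 128, 128, "M", 256, 256, 256, 256, "M",
         512, 512, 512, 512, "M", 512, 512, 512, 512, "M"]


def vgg_param_count(config, in_channels=3, num_classes=1000):
    """Return (conv_params, fc_params), assuming a 224x224 input."""
    conv_params = 0
    channels = in_channels
    for layer in config:
        if layer == "M":
            continue  # pooling halves the spatial size but adds no weights
        # 3x3 kernel weights plus one bias per output channel
        conv_params += channels * layer * 3 * 3 + layer
        channels = layer
    # After five poolings a 224x224 input becomes a 7x7 feature map.
    fc_sizes = [channels * 7 * 7, 4096, 4096, num_classes]
    fc_params = sum(a * b + b for a, b in zip(fc_sizes, fc_sizes[1:]))
    return conv_params, fc_params


for name, cfg in [("VGG-16", VGG16), ("VGG-19", VGG19)]:
    conv, fc = vgg_param_count(cfg)
    n_conv = sum(1 for layer in cfg if layer != "M")
    print(f"{name}: {n_conv} conv + 3 FC layers, {conv + fc:,} parameters")
```

It reports 138,357,544 parameters for VGG-16 and 143,667,240 for VGG-19. The 13 or 16 convolutional layers plus the 3 fully connected layers give the 16 and 19 weight layers in the names; max-pooling layers carry no weights and are not counted. Most of the parameters (about 124M) sit in the fully connected layers.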

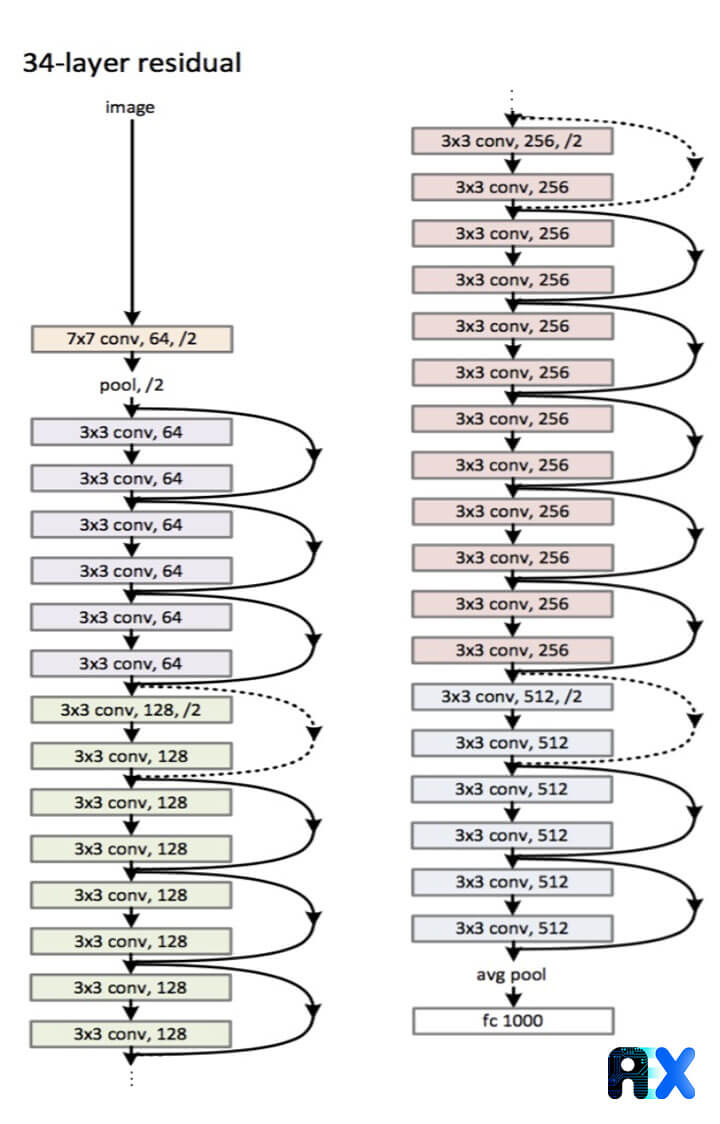

Residual Neural Network (ResNet) is a CNN-based network that adds skip connections (residual connections) around blocks of convolutional layers, so that each block learns a residual that is added to its input. Each convolution in a block is followed by batch normalization. Like the VGG family, ResNet comes in several versions, including ResNet-34, ResNet-50 with about 26M parameters, ResNet-101 with about 44M, and ResNet-152, which is even deeper with 152 layers. ResNet-50 and ResNet-101 are widely used for object detection and segmentation. The ResNet architecture also serves as the backbone of other deep learning architectures, such as Faster R-CNN and R-FCN.
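The depth in each ResNet name follows from how many residual blocks each of its four stages stacks. A minimal sketch, assuming the block counts from the ResNet paper and the usual convention of counting the stem convolution and the final fully connected layer but not the 1×1 projection shortcuts; `resnet_depth` and `residual_add` are hypothetical helper names, and `residual_add` works on plain Python lists rather than tensors.

```python
# Sketch: ResNet depth from its per-stage block counts.
# Basic blocks hold two 3x3 convs; bottleneck blocks hold 1x1-3x3-1x1 convs.
RESNETS = {
    "ResNet-34": ("basic", [3, 4, 6, 3]),
    "ResNet-50": ("bottleneck", [3, 4, 6, 3]),
    "ResNet-101": ("bottleneck", [3, 4, 23, 3]),
    "ResNet-152": ("bottleneck", [3, 8, 36, 3]),
}
CONVS_PER_BLOCK = {"basic": 2, "bottleneck": 3}


def resnet_depth(block_type, blocks_per_stage):
    # +1 for the 7x7 stem convolution, +1 for the final fully connected layer
    return CONVS_PER_BLOCK[block_type] * sum(blocks_per_stage) + 2


def residual_add(x, fx):
    """Skip connection: the block output is ReLU(F(x) + x)."""
    return [max(0.0, a + b) for a, b in zip(fx, x)]


for name, (kind, blocks) in RESNETS.items():
    print(name, resnet_depth(kind, blocks))
```

The loop prints 34, 50, 101, and 152. The skip connection is a plain element-wise addition of the block input to the block output, not a recurrent unit, which is why very deep versions such as ResNet-152 remain trainable.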

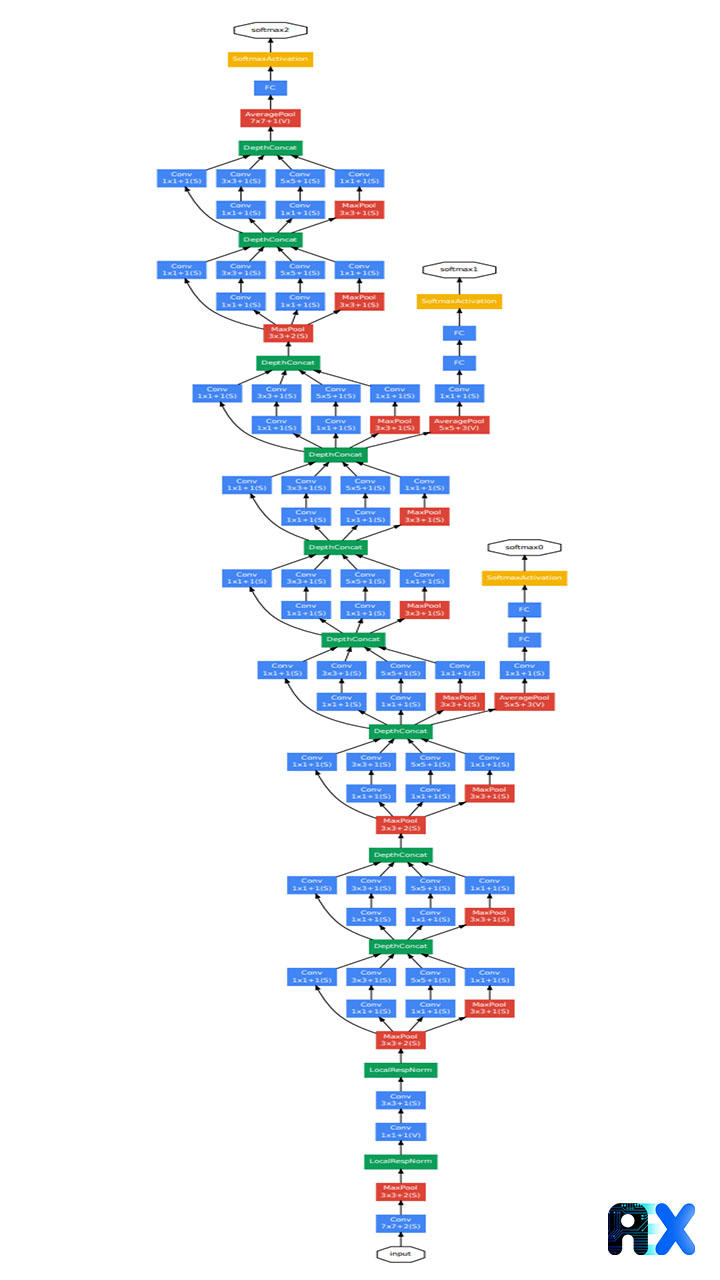

Inception-v1, also known as GoogLeNet, is one of the most widely used convolutional neural networks and is built from inception blocks. Each block applies 1×1, 3×3, and 5×5 convolutions in parallel, which allows the network to learn features at multiple scales. The branch outputs are concatenated, so the number of channels can vary from one block to the next. Unlike AlexNet and VGG, GoogLeNet uses global average pooling at the end of the network instead of stacking fully connected layers. Figure 3 illustrates the architecture of Inception-v1.
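The channel bookkeeping of an inception block can be sketched with plain arithmetic. This assumes the branch widths listed for inception (3a) and (3b) in the GoogLeNet paper, including the 1×1 "reduce" convolutions that the paper places in front of the 3×3 and 5×5 branches; the helper names are hypothetical and biases are ignored.

```python
# Sketch of Inception-v1 channel bookkeeping (no actual convolution).
def conv_weights(in_ch, out_ch, k):
    """Number of weights in a k x k convolution (biases ignored)."""
    return in_ch * out_ch * k * k


def inception_out_channels(b1x1, b3x3, b5x5, pool_proj):
    # Parallel branches are concatenated along the channel axis.
    return b1x1 + b3x3 + b5x5 + pool_proj


# Branch output widths of inception (3a) and (3b) in the GoogLeNet paper.
print(inception_out_channels(64, 128, 32, 32))   # inception (3a)
print(inception_out_channels(128, 192, 96, 64))  # inception (3b)

# 5x5 branch of inception (3a) on its 192-channel input:
direct = conv_weights(192, 32, 5)                             # 5x5 conv only
reduced = conv_weights(192, 16, 1) + conv_weights(16, 32, 5)  # 1x1 reduce first
print(direct, reduced)
```

Concatenating the branches gives 256 and 480 output channels, and the 1×1 reduction cuts the 5×5 branch of inception (3a) from 153,600 to 15,872 weights, close to a tenfold saving.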

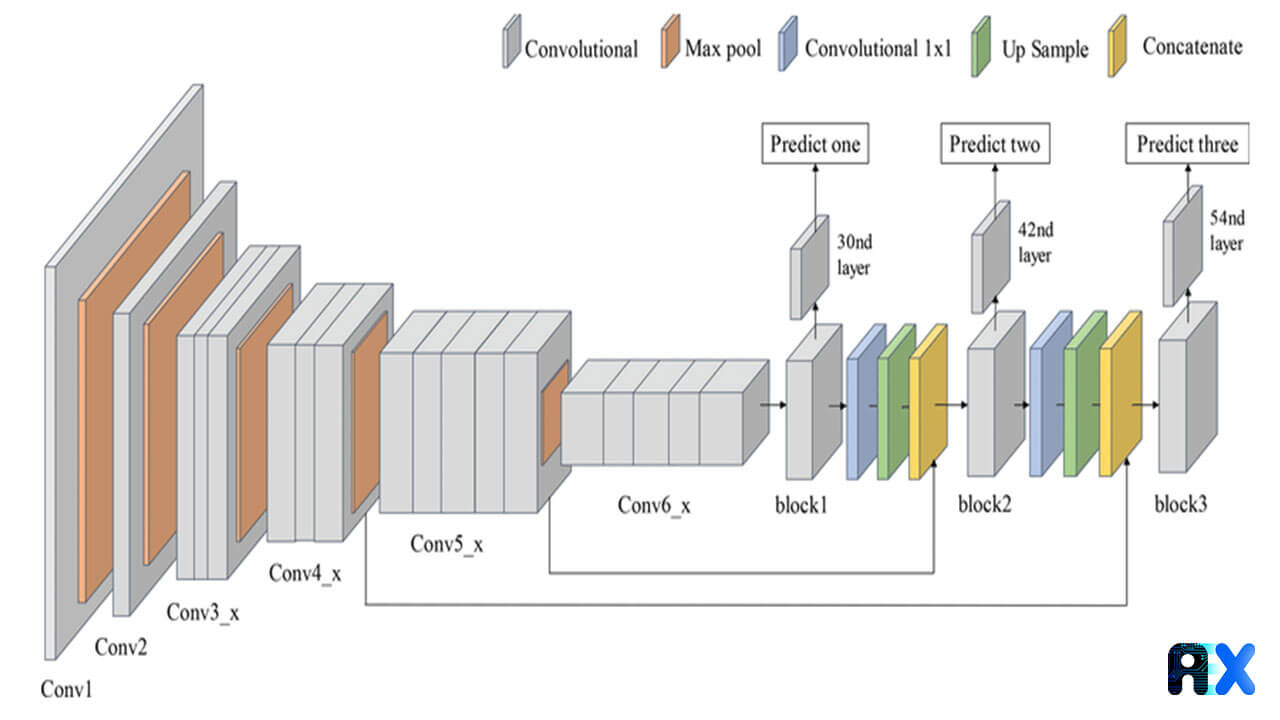

The Darknet-19 architecture, which builds on existing ideas such as inception and batch normalization, is well suited to building small, efficient networks. DarkNet networks are made up of convolutional and max-pooling layers; Darknet-19 has 19 convolutional layers and 5 max-pooling layers. Like VGG, it mostly uses 3 × 3 convolutional kernels, and it places 1 × 1 kernels between them to compress the feature representation and reduce the number of parameters. Darknet-19 is used in many object detection algorithms, including YOLO.
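To make the layer counts concrete, the sketch below writes out the Darknet-19 classification network as we understand it from Table 6 of the YOLO9000 paper and tallies its layers. The tuple format and variable names are our own assumptions; the code only counts layers and does not build a model.

```python
# Darknet-19 layer list: ("conv", filters, kernel_size) or ("maxpool",)
DARKNET19 = [
    ("conv", 32, 3), ("maxpool",),
    ("conv", 64, 3), ("maxpool",),
    ("conv", 128, 3), ("conv", 64, 1), ("conv", 128, 3), ("maxpool",),
    ("conv", 256, 3), ("conv", 128, 1), ("conv", 256, 3), ("maxpool",),
    ("conv", 512, 3), ("conv", 256, 1), ("conv", 512, 3),
    ("conv", 256, 1), ("conv", 512, 3), ("maxpool",),
    ("conv", 1024, 3), ("conv", 512, 1), ("conv", 1024, 3),
    ("conv", 512, 1), ("conv", 1024, 3),
    ("conv", 1000, 1),  # classifier, followed by global average pooling
]

convs = [layer for layer in DARKNET19 if layer[0] == "conv"]
pools = [layer for layer in DARKNET19 if layer[0] == "maxpool"]
n_3x3 = sum(1 for _, _, k in convs if k == 3)
n_1x1 = sum(1 for _, _, k in convs if k == 1)
print(len(convs), len(pools), n_3x3, n_1x1)
```

It prints `19 5 12 7`: 19 convolutional and 5 max-pooling layers, of which 12 convolutions use 3 × 3 kernels and 7 use 1 × 1 kernels, the last of them acting as the classifier.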

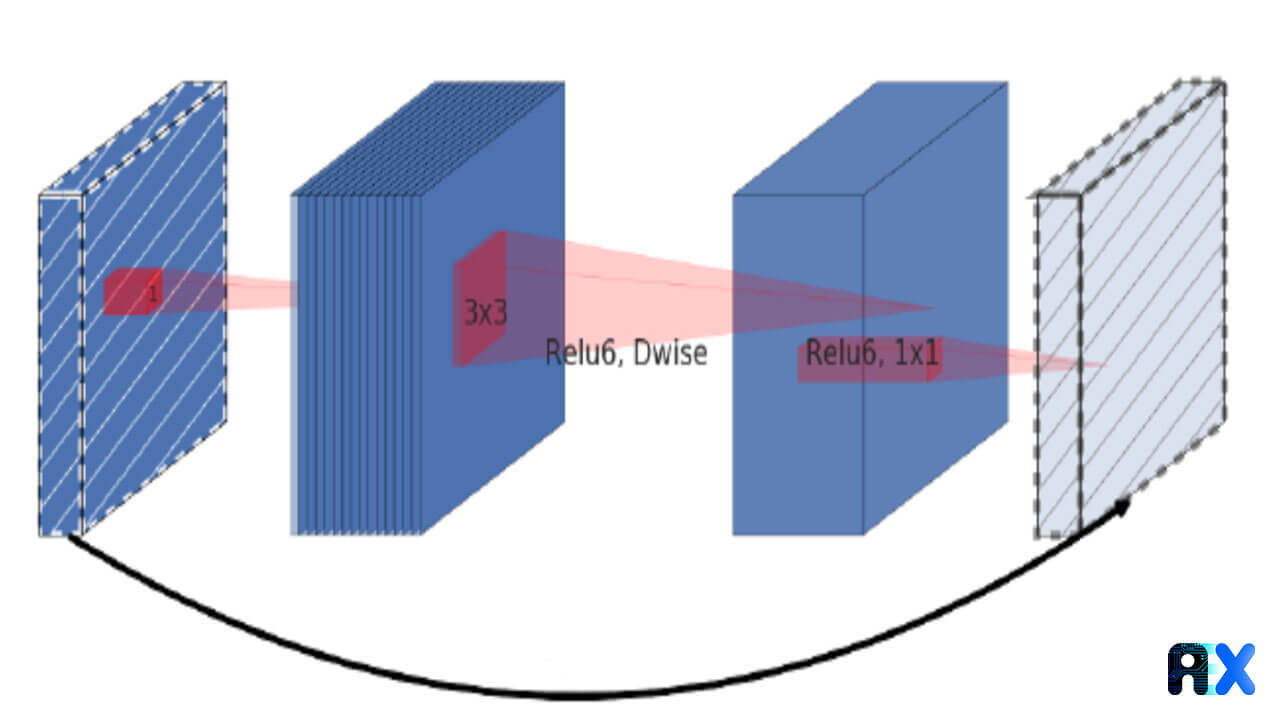

MobileNet was developed as a deep learning model for devices with limited computing power, such as mobile phones. Its architecture uses depth-wise separable convolutions and is considered lightweight. Two global hyper-parameters, a width multiplier and a resolution multiplier, let developers choose the model size that best fits their problem. MobileNet is trained on ImageNet for image classification. A later version, MobileNet-v2, is also used for object detection. It introduces a new residual block with a direct shortcut connection between bottleneck layers, as illustrated in Figure 5, and uses depth-wise separable convolutions to filter features.
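The savings from depth-wise separable convolutions follow from the cost formulas in the MobileNet paper: a standard convolution costs Dk·Dk·M·N·Df·Df multiply-adds, while a depth-wise plus point-wise pair costs Dk·Dk·M·Df·Df + M·N·Df·Df. A minimal sketch, where `alpha` and `rho` stand for the width and resolution multipliers; the function names and the example layer size (3×3 kernel, 512 channels, 14×14 feature map) are illustrative assumptions.

```python
def standard_conv_cost(dk, m, n, df):
    """Multiply-adds of a dk x dk conv: m input and n output channels, df x df map."""
    return dk * dk * m * n * df * df


def separable_conv_cost(dk, m, n, df, alpha=1.0, rho=1.0):
    """Depth-wise (one dk x dk filter per channel) + point-wise (1x1) cost.

    alpha thins every layer (width multiplier); rho shrinks the input
    resolution (resolution multiplier).
    """
    m, n, df = alpha * m, alpha * n, rho * df
    depthwise = dk * dk * m * df * df
    pointwise = m * n * df * df
    return depthwise + pointwise


std = standard_conv_cost(3, 512, 512, 14)
sep = separable_conv_cost(3, 512, 512, 14)
print(f"{sep / std:.3f}")  # equals 1/N + 1/Dk^2 for this layer
print(separable_conv_cost(3, 512, 512, 14, alpha=0.5))
```

For this layer the separable version needs about 11% of the multiply-adds of a standard convolution. Halving `alpha` cuts the cost roughly fourfold again, because the dominant point-wise term scales with alpha squared.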

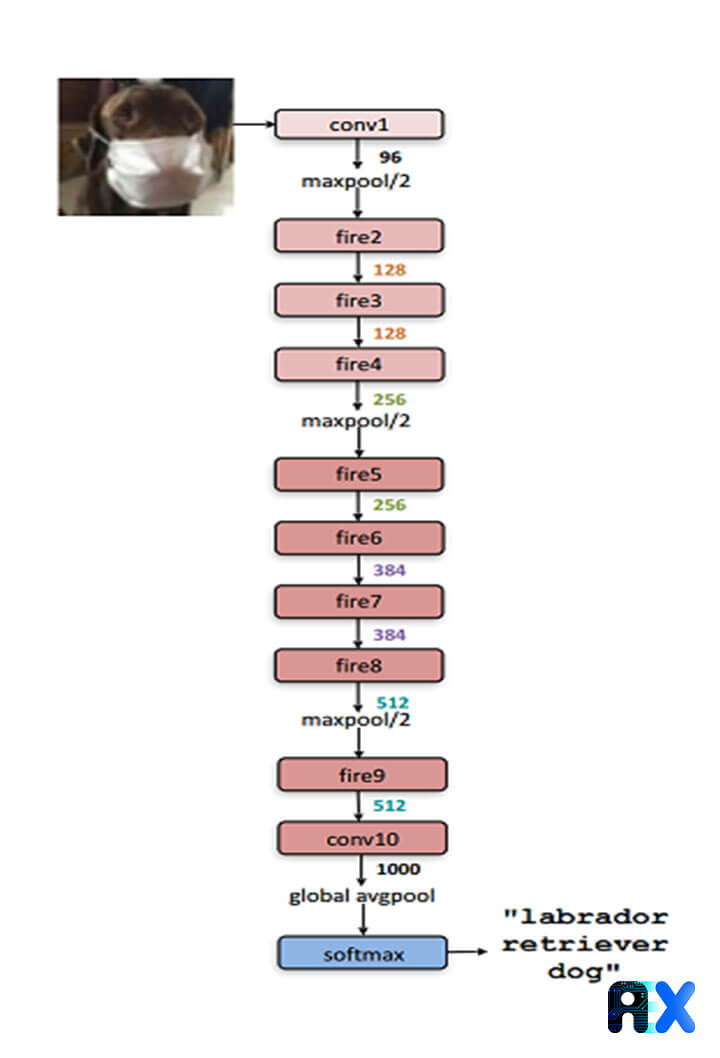

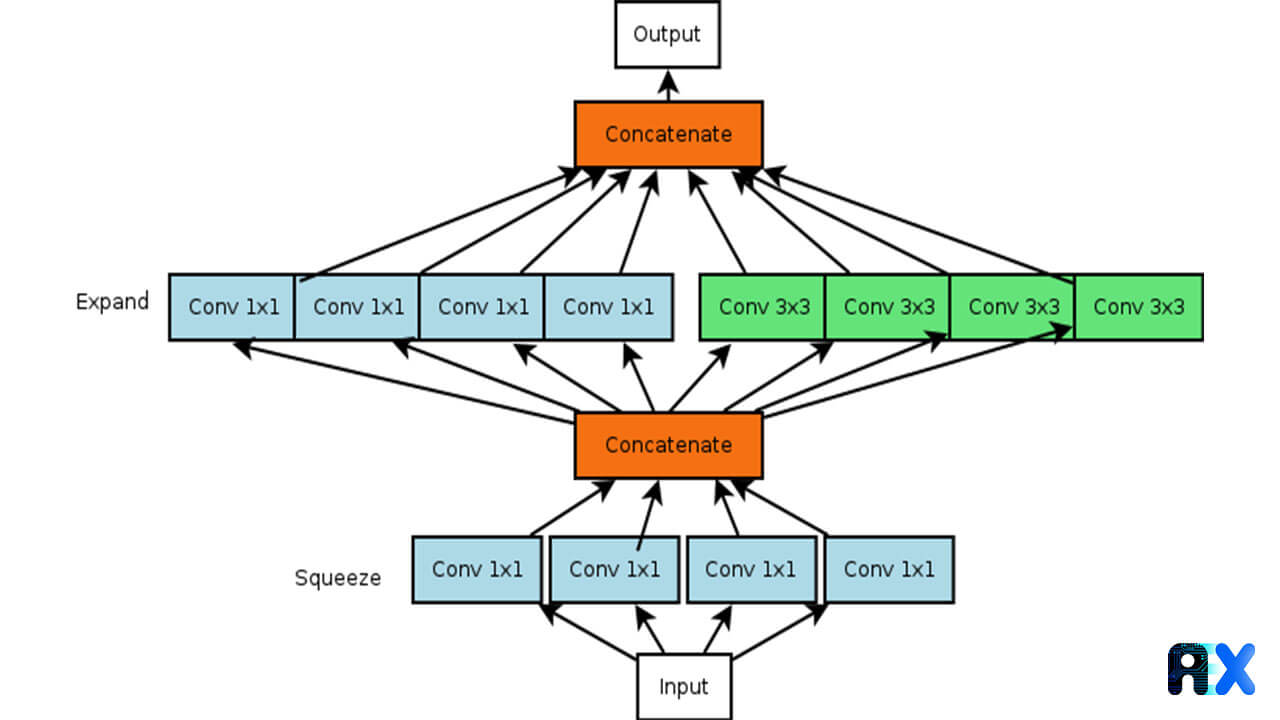

SqueezeNet was developed to reduce the number of parameters. It uses 1 × 1 filters instead of 3 × 3 filters in most layers, because a 1 × 1 filter has 9 times fewer parameters. It also reduces the number of input channels fed to the remaining 3 × 3 filters, and it delays down-sampling so that convolution layers work on large activation maps, which leads to higher classification accuracy. SqueezeNet is built from a sequence of fire modules. Each fire module consists of a squeeze convolution layer (with only 1 × 1 filters) that feeds an expand layer with a mix of 1 × 1 and 3 × 3 filters. A variant of SqueezeNet connects fire modules with ResNet-style bypass connections. According to its developers, SqueezeNet contains 1.24M parameters, which makes it a small backbone compared with other networks.
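The parameter savings of a fire module are easy to check by hand. This sketch assumes the `fire2` configuration from the SqueezeNet paper (96 input channels, 16 squeeze filters, 64 expand 1 × 1 and 64 expand 3 × 3 filters) and counts one bias per filter; the function names are hypothetical.

```python
def conv_params(in_ch, out_ch, k):
    """Weights of a k x k convolution plus one bias per output channel."""
    return in_ch * out_ch * k * k + out_ch


def fire_module_params(in_ch, s1x1, e1x1, e3x3):
    """Return (parameter count, output channels) of a fire module."""
    squeeze = conv_params(in_ch, s1x1, 1)  # 1x1 squeeze layer
    expand = conv_params(s1x1, e1x1, 1) + conv_params(s1x1, e3x3, 3)
    # expand outputs are concatenated along the channel axis
    return squeeze + expand, e1x1 + e3x3


params, out_ch = fire_module_params(96, 16, 64, 64)
plain = conv_params(96, out_ch, 3)  # a plain 3x3 conv with the same output width
print(params, out_ch, plain)
```

The fire module produces the same 128 output channels as a plain 3 × 3 convolution with 11,920 parameters instead of 110,720, roughly nine times fewer.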

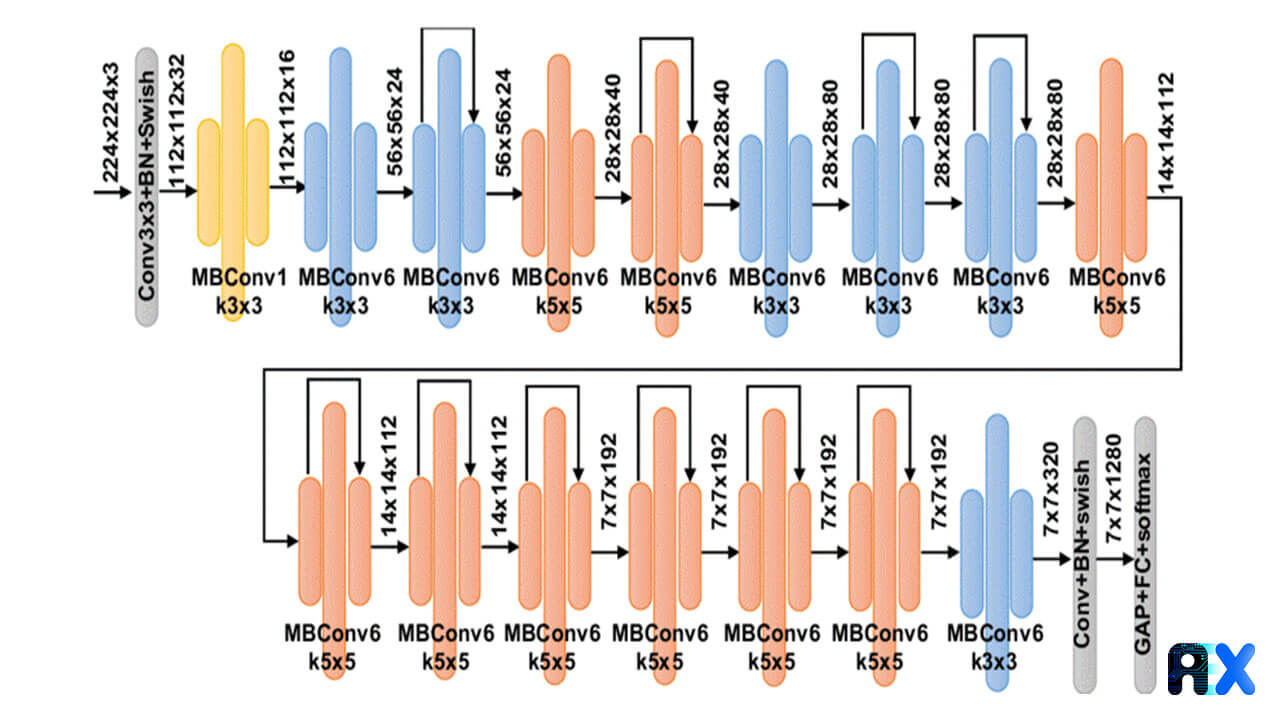

EfficientNet is a newer family of architectures that significantly outperforms other networks in classification tasks while using fewer parameters and fewer floating-point operations (FLOPs). It uses compound scaling to scale the network's width, depth, and resolution uniformly. EfficientNet-B7 is 8.4x smaller and 6.1x faster on inference than the best existing network at the time it was published. There are several versions of EfficientNet, from B0 to B7, and any of them can be chosen depending on the available resources and the acceptable computational cost. EfficientNet-B0 has 5.3 million parameters, while EfficientNet-B7 has 66 million.
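Compound scaling ties depth, width, and resolution to a single coefficient φ: depth grows as α^φ, width as β^φ, and resolution as γ^φ, with α·β²·γ² ≈ 2 so that FLOPs roughly double per unit of φ. The sketch uses α = 1.2, β = 1.1, γ = 1.15, the values reported for the B0 baseline in the EfficientNet paper. The published B1 to B7 configurations do not follow these exponents exactly, so treat this as an illustration of the rule rather than a recipe for the released models.

```python
ALPHA, BETA, GAMMA = 1.2, 1.1, 1.15  # depth, width, resolution bases


def compound_scale(phi):
    """Return (depth, width, resolution, FLOPs) multipliers for coefficient phi."""
    depth = ALPHA ** phi
    width = BETA ** phi
    resolution = GAMMA ** phi
    # Convolution FLOPs grow linearly with depth and quadratically
    # with both width and resolution.
    flops_factor = depth * width ** 2 * resolution ** 2
    return depth, width, resolution, flops_factor


for phi in range(4):
    d, w, r, f = compound_scale(phi)
    print(f"phi={phi}: depth x{d:.2f}, width x{w:.2f}, "
          f"resolution x{r:.2f}, FLOPs x{f:.2f}")
```

With these constants each step of φ multiplies FLOPs by about 1.92, close to the factor of 2 targeted by the constraint.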

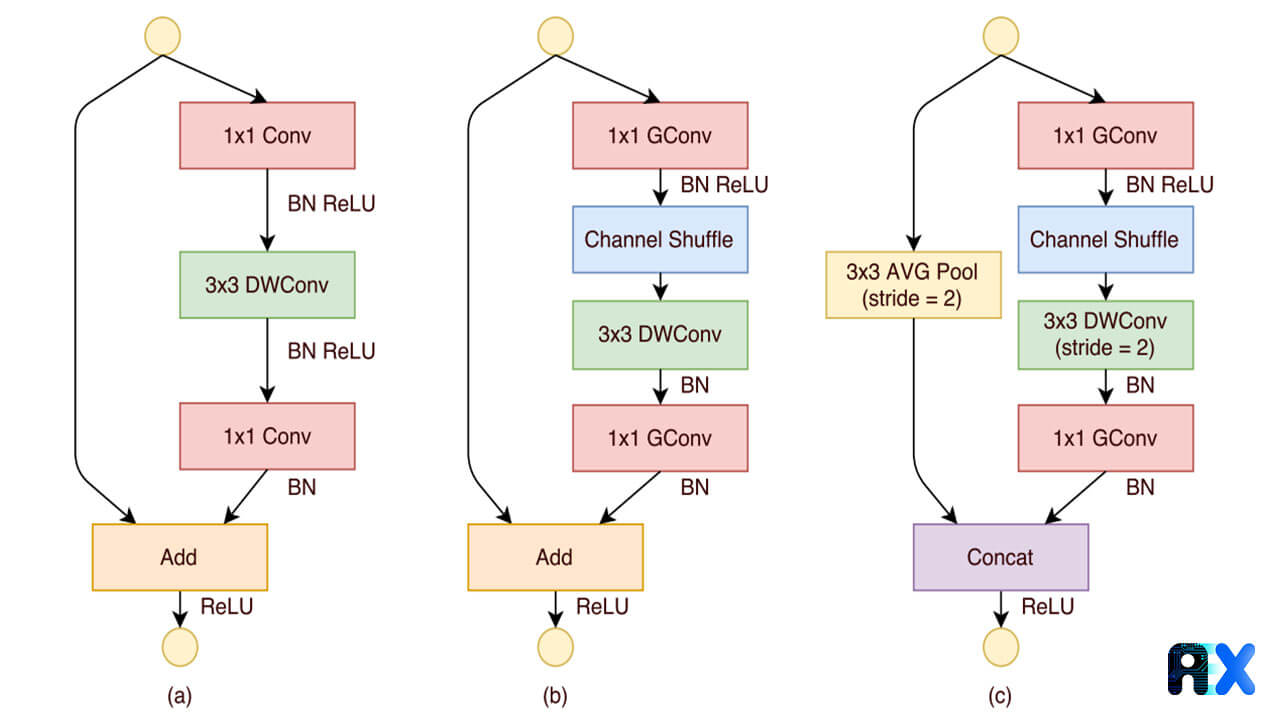

ShuffleNet is a computation-efficient CNN architecture designed for mobile devices with limited computing power; its goal is to reduce computational cost while preserving accuracy. It is built on two ideas: point-wise group convolution and channel shuffle. Group convolution was introduced in AlexNet to distribute the model over two GPUs, and ResNeXt later demonstrated its effectiveness. ShuffleNet uses point-wise group convolution to reduce the computational complexity of 1 × 1 convolutions. However, when group convolutions are stacked, each output channel only sees input channels from its own group, which can hurt accuracy, so the authors of ShuffleNet use a channel shuffle operation to share information across feature channels. ShuffleNet comes in two versions and has been widely used in tasks beyond image classification and object detection.
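Channel shuffle itself is only a reshape and transpose over the channel axis. A minimal sketch on a plain list of channel indices (a real implementation would operate on a tensor of shape N×C×H×W); `channel_shuffle` and `group_conv1x1_params` are hypothetical names, and the 240-channel example is just an illustrative size.

```python
def channel_shuffle(channels, groups):
    """Reshape channels to (groups, n), transpose to (n, groups), and flatten."""
    if len(channels) % groups:
        raise ValueError("channel count must be divisible by groups")
    n = len(channels) // groups
    return [channels[g * n + i] for i in range(n) for g in range(groups)]


def group_conv1x1_params(in_ch, out_ch, groups):
    """Weights of a 1x1 group convolution (biases ignored).

    Each group connects in_ch/groups inputs to out_ch/groups outputs.
    """
    return (in_ch // groups) * (out_ch // groups) * groups


print(channel_shuffle(list(range(6)), 3))
print(group_conv1x1_params(240, 240, 3), group_conv1x1_params(240, 240, 1))
```

With 6 channels in 3 groups, `[0, 1 | 2, 3 | 4, 5]` becomes `[0, 2, 4, 1, 3, 5]`, so each group of the next group convolution receives channels from every previous group. The grouped 1 × 1 convolution needs a third of the weights of the ungrouped one.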

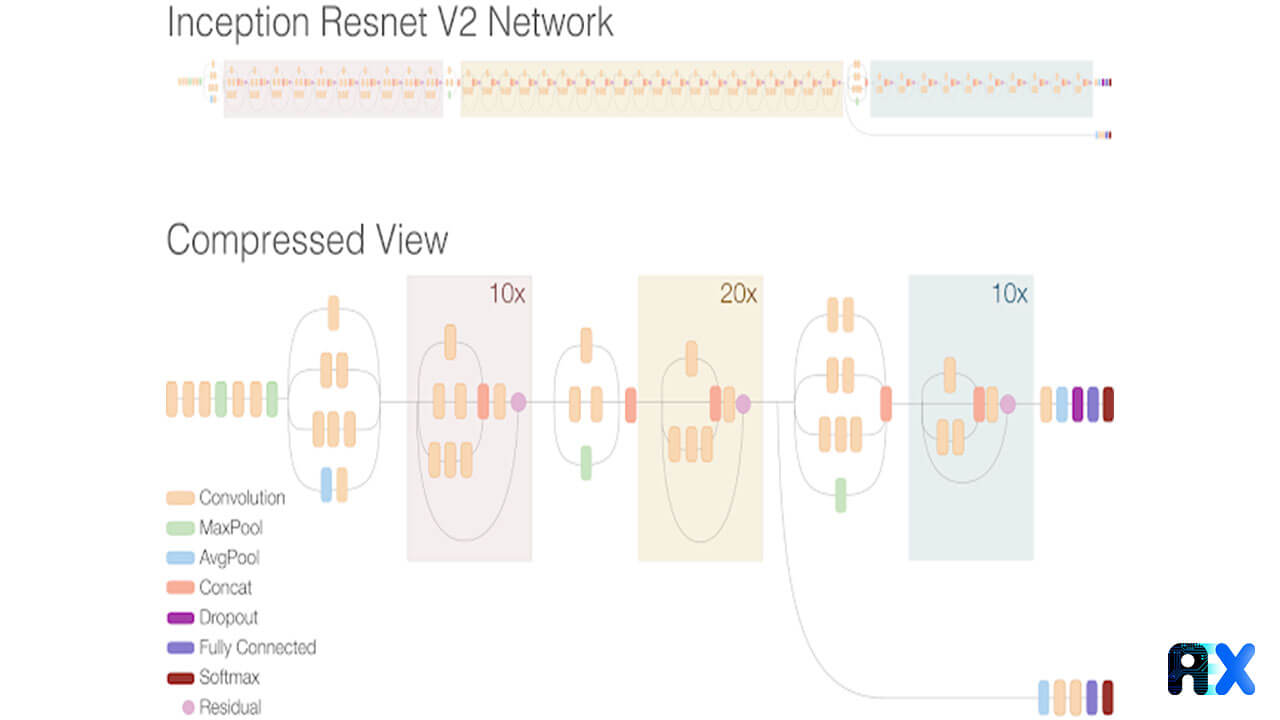

Inception-ResNet combines the ResNet and Inception architectures. It uses residual connections (skip connections between blocks of layers) and has 164 layers, 4 max-pooling and 160 convolutional, with about 55 million parameters. Inception-ResNet reaches higher accuracy and trains in fewer epochs. It is used as the backbone of many other architectures, such as Faster R-CNN and Mask R-CNN.
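An Inception-ResNet block concatenates the outputs of several inception-style branches, projects them back to the input width with a 1 × 1 convolution, and adds the result to the block's input. The toy sketch below mimics that data flow on plain Python lists, with caller-supplied functions standing in for the convolution branches and the projection; all names here are hypothetical. The optional `scale` argument reflects the Inception-ResNet paper's report that scaling residuals down (by roughly 0.1 to 0.3) before the addition stabilized training.

```python
def inception_resnet_block(x, branches, project, scale=1.0):
    """Toy Inception-ResNet block on a list of activations.

    branches: functions mapping x to a list (stand-ins for conv branches)
    project:  function mapping the concatenated list back to len(x) values
              (stand-in for the 1x1 projection convolution)
    """
    mixed = [v for branch in branches for v in branch(x)]  # channel concat
    residual = project(mixed)
    if len(residual) != len(x):
        raise ValueError("projection must restore the input width")
    # scaled residual addition followed by ReLU
    return [max(0.0, a + scale * r) for a, r in zip(x, residual)]


def project(mixed):
    # hypothetical fixed "projection" from 2 concatenated values to 2 outputs
    return [mixed[0], -mixed[1]]


branches = [lambda v: [sum(v)], lambda v: [max(v)]]
print(inception_resnet_block([1.0, 2.0], branches, project, scale=0.5))
```

Because the projection restores the input width, the addition is always well defined, which is what lets inception-style branches sit inside a residual connection.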

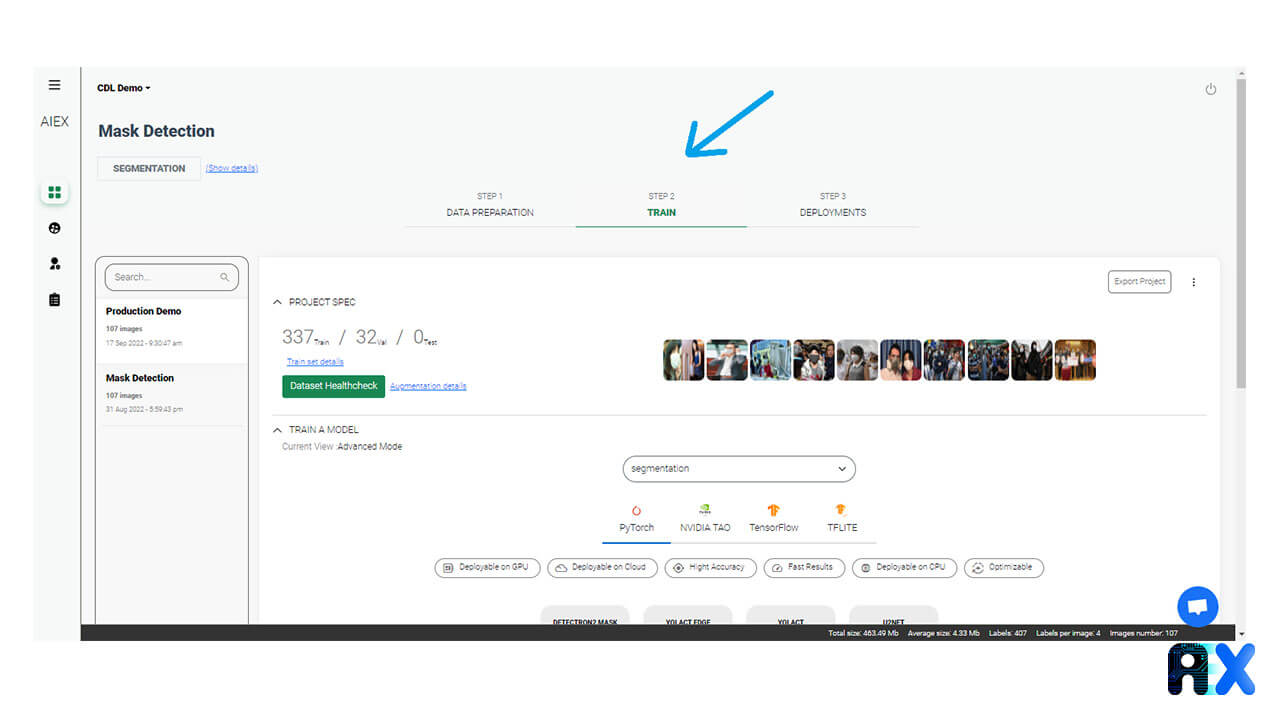

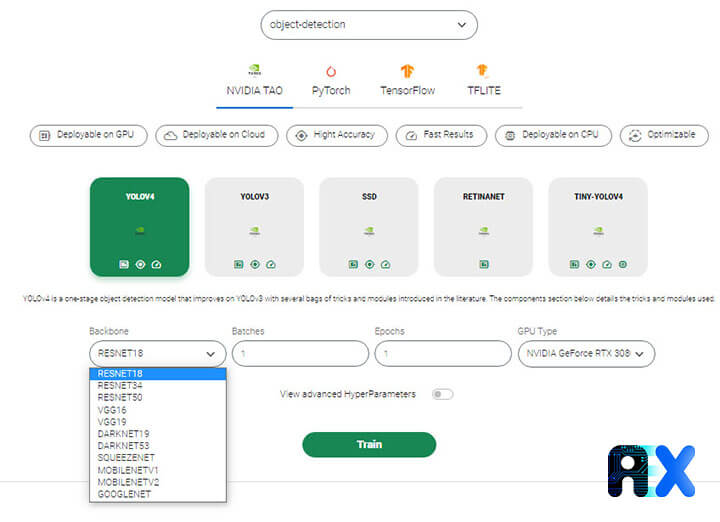

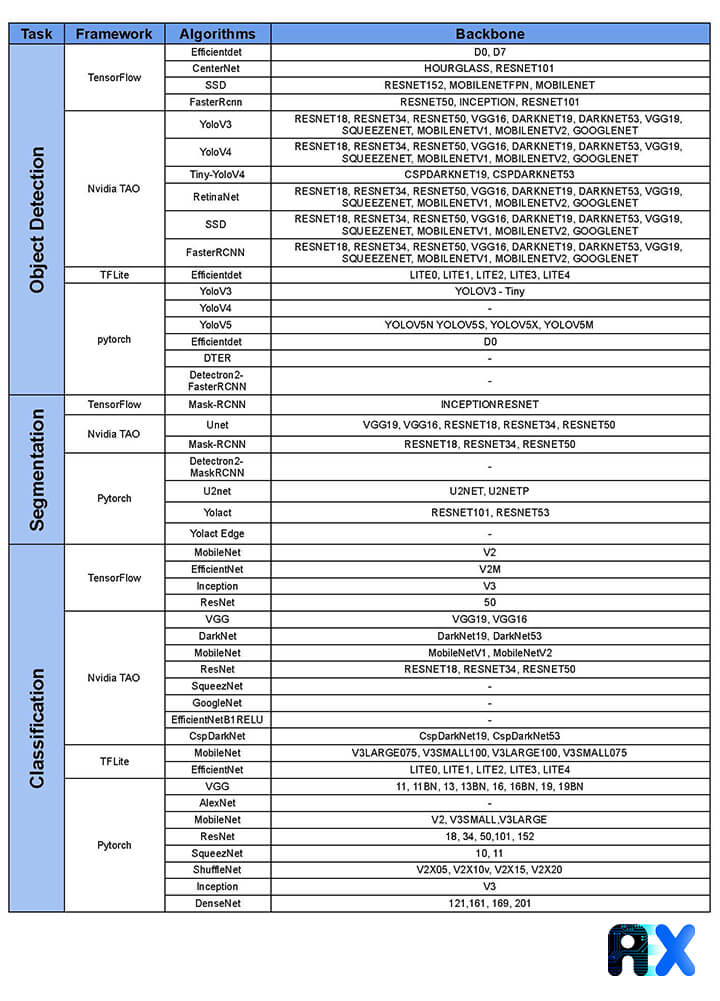

Each algorithm on the AIEX platform supports different backbones. We start by preparing the data on the platform; the second step is training the model. We choose the desired framework from PyTorch, TensorFlow, NVIDIA TAO, and TFLite, select the desired algorithm in that framework as shown in Figure 13, and then select the backbone in the backbone section. Table 1 lists all the backbone networks available on the AIEX platform for classification, segmentation, and object detection.

Table 1. Backbones for all algorithms and frameworks used by AIEX

https://github.com/pytorch/vision/blob/master/torchvision/models/vgg.py

https://github.com/pytorch/vision/blob/master/torchvision/models/vgg.py

https://github.com/pytorch/vision/blob/master/torchvision/models/resnet.py

https://github.com/Lornatang/GoogLeNet-PyTorch

https://github.com/weiaicunzai/pytorchcifar100/blob/master/models/inceptionv3.py

https://github.com/zhulf0804/Inceptionv4_and_InceptionResNetv2.PyTorch

https://github.com/visionNoob/pytorch-darknet19

https://github.com/kuangliu/pytorchcifar/blob/master/models/shufflenet.py

https://github.com/pytorch/vision/blob/master/torchvision/models/squeezenet.py

https://github.com/tensorflow/tfjs-models/tree/master/mobilenet

© 2022 Aiex.ai All Rights Reserved.