The AIEX Deep Learning platform provides all the tools needed for a complete deep learning workflow, from data management to model training and deployment of the trained models. With the trained models you can transform your visual inspections, saving time and money while increasing accuracy and speed.

Object detection, segmentation, and classification are the most common applications of computer vision technology. To decide which model to use for each of these applications, how to tune the training hyperparameters, and whether regularization techniques are needed, you need the right evaluation metrics. This article examines the metrics used to evaluate machine vision models and the metrics implemented on the AIEX platform.

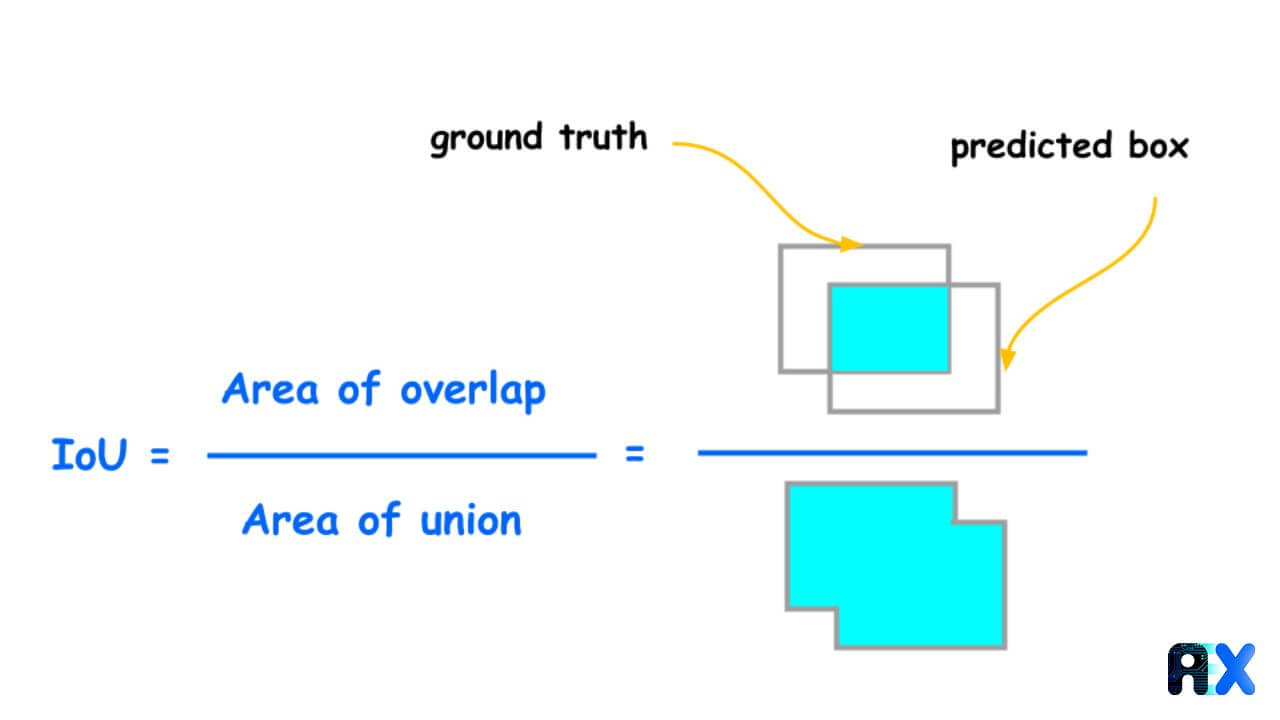

In object detection and segmentation problems, the ground truth label is a bounding box or a mask marking where the object is present. The Intersection over Union (IoU) metric compares the ground-truth bounding box with the predicted bounding box by measuring how much the two overlap relative to the total area they cover. It can be expressed as follows:

IoU = Area of Intersection / Area of Union

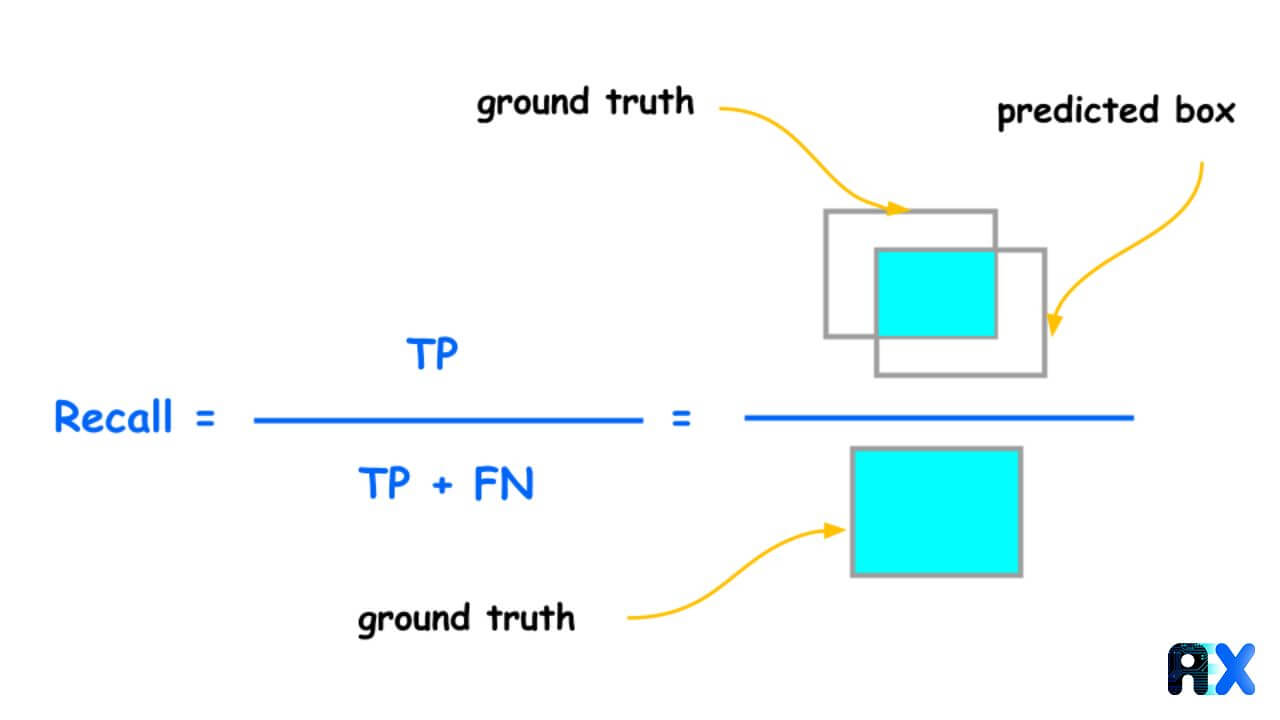
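The IoU formula above can be sketched in a few lines of Python. This is a minimal illustration, not the AIEX implementation: the function name `iou` and the `(x_min, y_min, x_max, y_max)` box layout are assumptions made for the example.

```python
def iou(box_a, box_b):
    """Intersection over Union of two axis-aligned boxes.

    Assumed layout (hypothetical, for illustration): (x_min, y_min, x_max, y_max).
    """
    # Corners of the overlapping rectangle, if any.
    ix_min = max(box_a[0], box_b[0])
    iy_min = max(box_a[1], box_b[1])
    ix_max = min(box_a[2], box_b[2])
    iy_max = min(box_a[3], box_b[3])

    # Clamp to zero so disjoint boxes yield an empty intersection.
    inter_w = max(0.0, ix_max - ix_min)
    inter_h = max(0.0, iy_max - iy_min)
    intersection = inter_w * inter_h

    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - intersection

    return intersection / union if union > 0 else 0.0


ground_truth = (0, 0, 2, 2)
prediction = (1, 1, 3, 3)
print(iou(ground_truth, prediction))  # overlap of 1 over a union of 7
```

A prediction is then usually counted as a true positive when its IoU with a ground-truth box reaches a chosen threshold such as 0.5.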

Recall is the ratio of the number of true positives to the total number of actual (relevant) objects. To put it more formally:

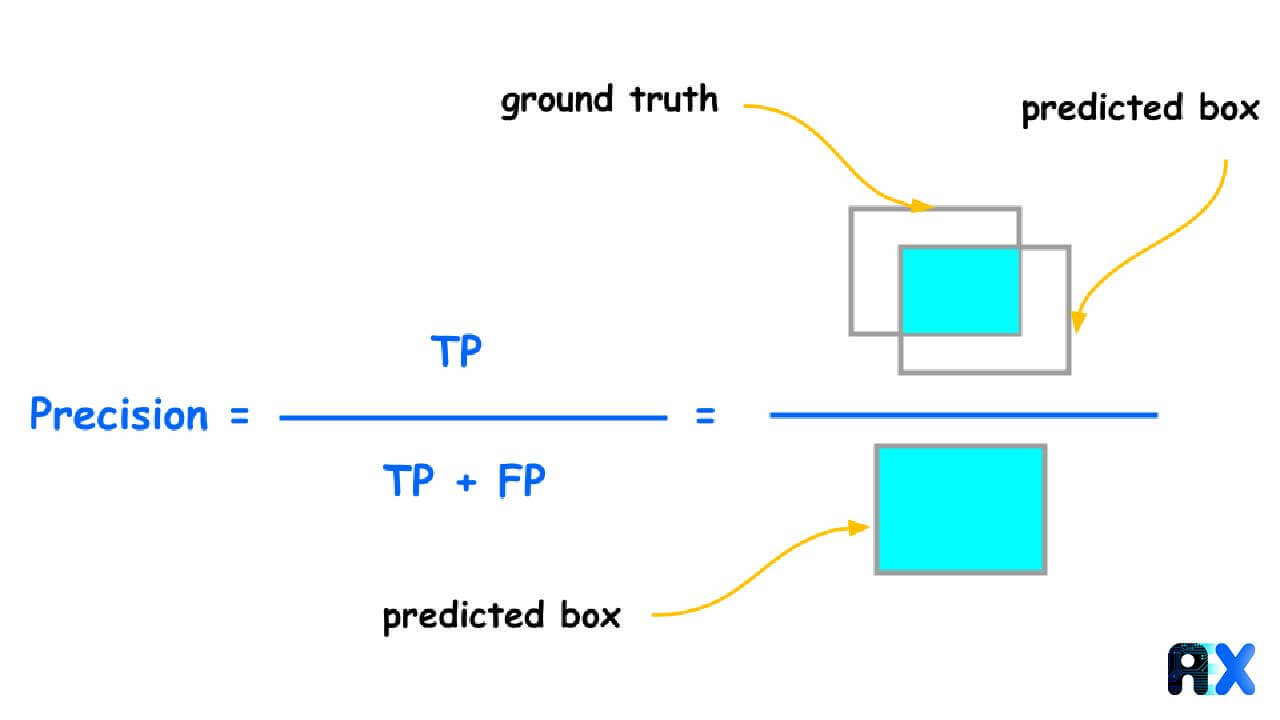

Precision is the fraction of instances classified as positive that are actually positive, that is, the number of true positives divided by the total number of positive predictions. To calculate precision, we use the following formula:

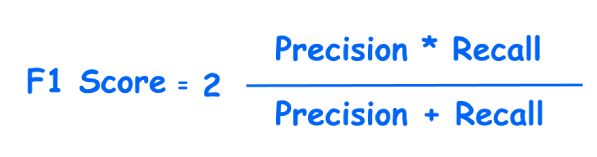

An F1 score is the harmonic mean of precision and recall, expressed as follows:

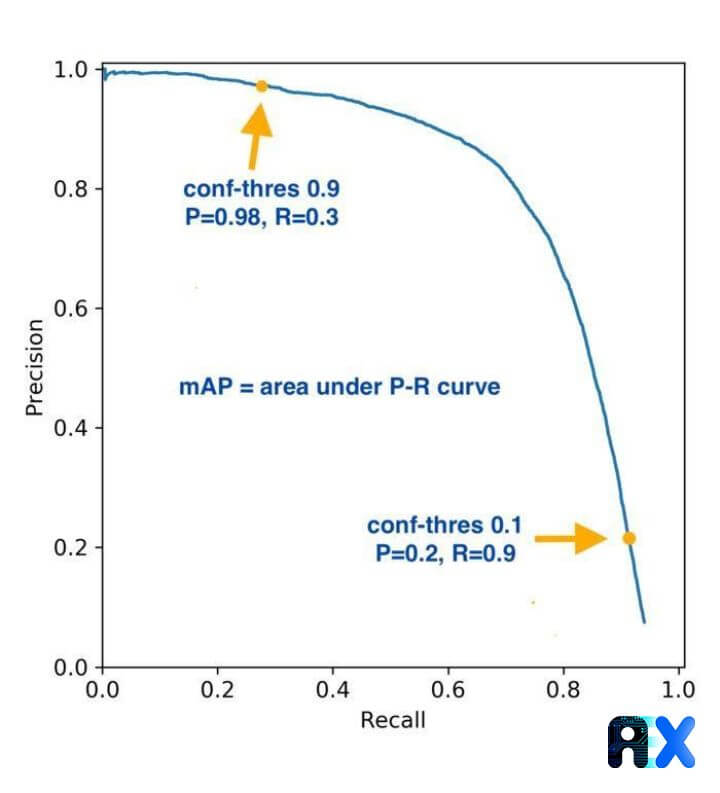
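As a rough sketch, the three definitions above (recall, precision, and F1) can be computed from the counts of true positives, false positives, and false negatives. The function name `precision_recall_f1` and the example counts are illustrative assumptions, not values from the AIEX platform.

```python
def precision_recall_f1(tp, fp, fn):
    """Compute precision, recall, and F1 from raw detection counts.

    tp: true positives, fp: false positives, fn: false negatives.
    Each ratio falls back to 0.0 when its denominator is zero.
    """
    precision = tp / (tp + fp) if (tp + fp) > 0 else 0.0
    recall = tp / (tp + fn) if (tp + fn) > 0 else 0.0
    # Harmonic mean of precision and recall.
    if precision + recall > 0:
        f1 = 2 * precision * recall / (precision + recall)
    else:
        f1 = 0.0
    return precision, recall, f1


# Hypothetical counts: 8 correct detections, 2 false alarms, 4 missed objects.
p, r, f1 = precision_recall_f1(tp=8, fp=2, fn=4)
print(f"precision={p:.3f} recall={r:.3f} f1={f1:.3f}")
```

Because F1 is a harmonic mean, it stays low unless both precision and recall are reasonably high, which is why it is often preferred over a simple average of the two.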

In most cases, we use precision-recall curves to evaluate the quality of the detections, but Average Precision (AP) condenses the curve into a single number, which makes it easier to compare one model with another. AP is the weighted mean of the precisions achieved at each confidence threshold, where the weight is the increase in recall from the previous threshold. In general, AP is the area under the precision-recall curve.

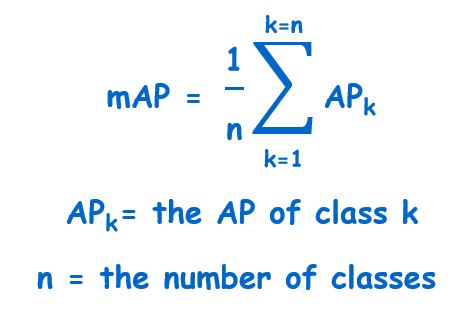
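The weighted-mean description of AP can be sketched as follows. This is a minimal, uninterpolated version written for illustration; benchmark tools such as the COCO evaluator use interpolated variants, so their numbers will differ slightly. The function name and input layout (confidence scores, true-positive flags, number of ground-truth objects) are assumptions.

```python
def average_precision(scores, is_true_positive, num_ground_truth):
    """Uninterpolated AP: sum of (recall step) * (precision at that step).

    scores: confidence of each detection.
    is_true_positive: whether each detection matched a ground-truth object.
    num_ground_truth: total number of ground-truth objects for this class.
    """
    if num_ground_truth == 0:
        return 0.0

    # Walk through detections from most to least confident.
    order = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)

    tp = 0
    fp = 0
    ap = 0.0
    previous_recall = 0.0
    for i in order:
        if is_true_positive[i]:
            tp += 1
        else:
            fp += 1
        precision = tp / (tp + fp)
        recall = tp / num_ground_truth
        # Only steps where recall increases contribute to the sum.
        ap += (recall - previous_recall) * precision
        previous_recall = recall
    return ap


# Hypothetical detections for one class with 4 ground-truth objects.
scores = [0.9, 0.8, 0.7, 0.6]
matched = [True, False, True, True]
print(average_precision(scores, matched, num_ground_truth=4))
```

Lowering the confidence threshold one detection at a time traces out the precision-recall curve, and the running sum approximates the area under it.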

Mean Average Precision (mAP) is an extension of Average Precision. AP is calculated for each object class individually, while mAP is the mean of the per-class AP values and therefore summarizes the entire model in a single number. mAP@0.5 is the mAP calculated at an IoU threshold of 0.5, meaning that a prediction counts as correct only if its IoU with a ground-truth object is at least 0.5.

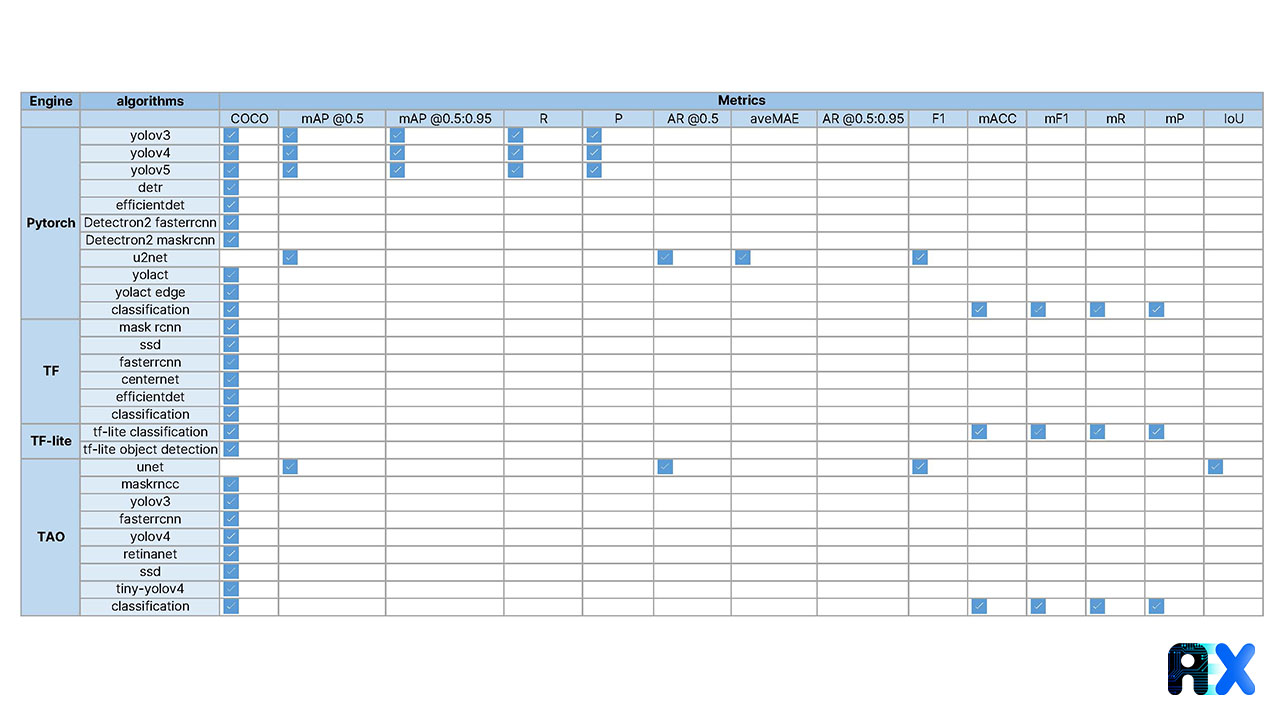
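Assuming an AP value has already been computed for each class at a fixed IoU threshold, mAP is simply their mean. The sketch below uses hypothetical class names and AP values chosen only for illustration.

```python
def mean_average_precision(ap_per_class):
    """Mean of per-class AP values (all computed at the same IoU threshold).

    ap_per_class: dict mapping a class name to its AP.
    """
    if not ap_per_class:
        return 0.0
    return sum(ap_per_class.values()) / len(ap_per_class)


# Hypothetical per-class AP values at an IoU threshold of 0.5.
ap_at_050 = {"scratch": 0.80, "dent": 0.60, "crack": 0.70}
print(f"mAP@0.5 = {mean_average_precision(ap_at_050):.2f}")
```

Every class contributes equally to the mean, so a rare class with poor AP lowers mAP as much as a frequent one.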

Evaluation metrics are essential for assessing algorithms. The AIEX platform offers a variety of metrics so that users can evaluate trained models effectively. Among them, the COCO metrics are regarded by the AIEX platform as one of the most comprehensive sets of evaluation metrics. For more details, see the COCO detection evaluation documentation.

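Object detectors on COCO are evaluated by these 12 metrics:

Average Precision (AP): AP averaged over IoU thresholds from 0.50 to 0.95 in steps of 0.05 (the primary COCO metric), AP at IoU = 0.50, and AP at IoU = 0.75.

AP Across Scales: AP for small objects (area below 32² pixels), AP for medium objects (area between 32² and 96² pixels), and AP for large objects (area above 96² pixels).

Average Recall (AR): AR given at most 1, 10, and 100 detections per image.

AR Across Scales: AR for small, medium, and large objects, using the same area ranges as for AP.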
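The primary COCO AP metric averages results over ten IoU thresholds, from 0.50 to 0.95 in steps of 0.05. The sketch below shows only that averaging step; it assumes mAP has already been computed at each threshold, and the function names and input values are hypothetical.

```python
def coco_iou_thresholds():
    """The ten IoU thresholds 0.50, 0.55, ..., 0.95."""
    return [percent / 100 for percent in range(50, 100, 5)]


def average_over_iou_thresholds(map_by_threshold):
    """Mean of mAP values over the ten thresholds.

    map_by_threshold: dict mapping each IoU threshold to the mAP at that threshold.
    """
    thresholds = coco_iou_thresholds()
    return sum(map_by_threshold[t] for t in thresholds) / len(thresholds)


# Hypothetical mAP values that drop as the IoU requirement gets stricter.
example = {t: 1.0 - t for t in coco_iou_thresholds()}
print(f"mAP@0.5:0.95 = {average_over_iou_thresholds(example):.3f}")
```

Averaging over stricter thresholds rewards models whose boxes are tightly localized, not just roughly in the right place.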

AIEX platform metrics are shown below:

Precision = P

Recall = R

Macro F1 = mF1

Macro accuracy = mACC

Macro recall = mR

Macro precision = mP

mAP at IoU 0.5 = mAP@0.5

mAP at IoU 0.5:0.95 = mAP@0.5:0.95

AR at IoU 0.5 = AR@0.5

AR at IoU 0.5:0.95 = AR@0.5:0.95
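The "macro" metrics in this list are conventionally computed by evaluating the metric for each class separately and then taking the unweighted mean across classes. The sketch below follows that common convention for precision, recall, and F1 using a confusion matrix. Whether the AIEX platform computes its macro metrics in exactly this way is an assumption, and the function name and example matrix are hypothetical.

```python
def macro_metrics(confusion):
    """Macro-averaged precision, recall, and F1 from a confusion matrix.

    confusion[i][j] = number of samples of true class i predicted as class j.
    """
    n = len(confusion)
    precisions, recalls, f1s = [], [], []
    for k in range(n):
        tp = confusion[k][k]
        fp = sum(confusion[i][k] for i in range(n)) - tp  # predicted k, truly other
        fn = sum(confusion[k]) - tp                       # truly k, predicted other
        p = tp / (tp + fp) if (tp + fp) > 0 else 0.0
        r = tp / (tp + fn) if (tp + fn) > 0 else 0.0
        f1 = 2 * p * r / (p + r) if (p + r) > 0 else 0.0
        precisions.append(p)
        recalls.append(r)
        f1s.append(f1)
    # Unweighted mean: every class counts equally, regardless of its size.
    return {
        "mP": sum(precisions) / n,
        "mR": sum(recalls) / n,
        "mF1": sum(f1s) / n,
    }


# Hypothetical two-class confusion matrix.
print(macro_metrics([[8, 2], [1, 9]]))
```

Macro averaging treats all classes equally, so it highlights weak performance on rare classes that a sample-weighted average would hide.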

© 2022 Aiex.ai All Rights Reserved.